Multimodal Artificial Intelligence represents a significant shift in how digital systems process information, moving away from isolated data streams toward a unified understanding of human communication. In the context of Instructional Design, multimodal AI is defined as a system capable of interpreting, synthesizing, and generating multiple data types (text, images, audio, and video) within a single architectural framework. Unlike traditional unimodal AI, which is confined to a single format, multimodal systems correlate different inputs to produce more contextually aware outputs, loosely mirroring the way human cognition combines several senses. For learning professionals, this technology offers a bridge between technological efficiency and the natural, multi-sensory way in which humans acquire knowledge.

The emergence of multimodal AI is not merely a technical upgrade but a fundamental change in the toolkit available to Learning and Development (L&D) professionals. By integrating diverse data formats, these models can analyze a training video, cross-reference its audio with a transcript, and interpret the visual cues of the presenter simultaneously. This holistic approach allows for a deeper level of pattern recognition, enabling the creation of smarter, more adaptive learning environments that were previously impossible with text-only or image-only software.

The Technological Evolution: From Unimodal to Multimodal Systems

The trajectory of Artificial Intelligence in education has followed a clear chronological path. In the early 2010s, AI applications in learning were largely unimodal, focusing on narrow tasks such as automated grading of multiple-choice questions or basic text-based search functions. By the mid-2010s, Natural Language Processing (NLP) advanced significantly, leading to the rise of chatbots and automated translation tools. However, these systems remained limited; they could "read" text but were "blind" to the context provided by accompanying visuals or the tone of a voice.

The breakthrough occurred in the early 2020s, when transformer models, an architecture first introduced in 2017, were scaled into large neural networks trained on several data types at once. The release of models like GPT-4 and Google’s Gemini in 2023 marked the transition into the multimodal era. These systems are trained on massive datasets where text is paired with images and videos, allowing the AI to learn the relationship between a written description and a visual representation. For Instructional Designers, this evolution means moving from "assembling" content pieces to "architecting" integrated experiences. The timeline of this development suggests that we are currently in an acceleration phase, where multimodal capabilities are being integrated into standard authoring tools and Learning Management Systems (LMS).

Mechanics of Multimodal Machine Learning

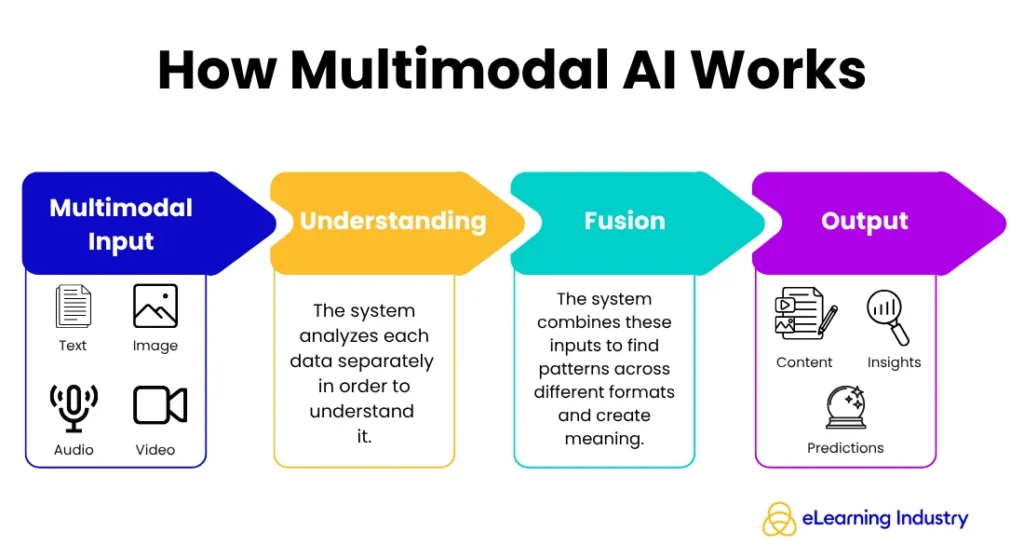

To understand how multimodal AI functions, one must examine the underlying process of multimodal machine learning. This process follows a structured flow: input, understanding, connection, and output. When a system receives multimodal data—such as a webinar recording—it performs "feature extraction." It identifies the keywords in the speech, the objects in the video frames, and the sentiment in the speaker’s tone.
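The feature-extraction step can be illustrated with a deliberately simple sketch. It assumes that speech has already been transcribed, that object labels and a tone score have been produced by separate vision and audio models, and that a small stopword list is good enough for keyword spotting. The names `Segment`, `extract_features`, and `STOPWORDS` are hypothetical and chosen only for this illustration; a production system would use trained models rather than these rules.

```python
from dataclasses import dataclass

# Assumption: a tiny stopword list stands in for real keyword extraction.
STOPWORDS = {"the", "a", "an", "is", "of", "and", "to", "in", "this", "that", "it", "we"}


@dataclass
class Segment:
    transcript: str          # speech already converted to text
    frame_labels: list[str]  # object labels, assumed to come from a vision model
    tone_score: float        # assumed tone score in [-1, 1] from an audio model


def extract_features(segment: Segment) -> dict:
    """Reduce one segment of a recording to keywords, objects, and a sentiment label."""
    words = [w.strip(".,!?").lower() for w in segment.transcript.split()]
    keywords = sorted({w for w in words if w and w not in STOPWORDS})
    objects = sorted(set(segment.frame_labels))

    # Assumption: +/-0.2 is an arbitrary cut-off for this sketch.
    if segment.tone_score > 0.2:
        sentiment = "positive"
    elif segment.tone_score < -0.2:
        sentiment = "negative"
    else:
        sentiment = "neutral"

    return {"keywords": keywords, "objects": objects, "sentiment": sentiment}


segment = Segment("This is the pressure valve.", ["valve", "presenter", "valve"], 0.5)
features = extract_features(segment)
```

Each modality is reduced to a small, comparable set of features, which is what makes the later connection step possible.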

The critical differentiator is the "fusion" stage. This is where the AI aligns the different data types in time and meaning so that they can be checked against one another. If a speaker says the word "cylinder" while holding a cube-shaped object, a multimodal model can detect the discrepancy, whereas a unimodal model would only register the spoken word. This level of understanding allows for the generation of "richer outputs," such as a summary that includes both the spoken points and the visual diagrams presented during a session. For Instructional Designers, this helps ensure that the content produced is not only accurate but contextually synchronized across all media types.
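The consistency check described above can be sketched as a rule-based comparison between what is said and what is seen at the same moment. This is only a stand-in: real multimodal models fuse learned representations rather than comparing word lists. The `SHAPES` vocabulary, the `find_mismatches` function, and the segment format are all assumptions made for this example.

```python
# Assumption: a small fixed vocabulary of shape names is enough for the illustration.
SHAPES = {"cylinder", "cube", "sphere", "cone"}


def find_mismatches(segments):
    """Flag moments where a spoken shape is absent but a different shape is visible.

    segments: list of (timestamp_seconds, spoken_words, visible_labels) tuples,
    assumed to be already time-aligned by an earlier step.
    """
    mismatches = []
    for timestamp, spoken, visible in segments:
        spoken_shapes = SHAPES & {word.lower() for word in spoken}
        visible_shapes = SHAPES & {label.lower() for label in visible}
        for shape in sorted(spoken_shapes - visible_shapes):
            if visible_shapes:  # only flag when some other shape is actually on screen
                mismatches.append((timestamp, shape, sorted(visible_shapes)))
    return mismatches


aligned = [
    (12.0, ["this", "cylinder"], ["cube", "hand"]),  # says "cylinder", shows a cube
    (20.0, ["the", "cube"], ["cube"]),               # speech and image agree
]
flags = find_mismatches(aligned)
```

A text-only system sees only the second element of each tuple, so it has no way to raise the flag at the 12-second mark.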

High-Impact Use Cases in Modern Instructional Design

The application of multimodal AI in the corporate and educational sectors is improving both efficiency and engagement. Some industry estimates suggest that organizations using AI-integrated workflows can reduce content production time by 30% to 50%. These gains come from several high-impact use cases.

Streamlined Content Production and Repurposing

Traditionally, creating a comprehensive eLearning module required separate workflows for scriptwriting, graphic design, and video editing. Multimodal generative AI collapses these silos. A single prompt or a raw document can be transformed into a narrated video with relevant imagery and interactive assessments. This capability allows small L&D teams to scale their output without a proportional increase in headcount or budget. Furthermore, it facilitates "content atomization," where a single high-quality video can be automatically broken down into text-based guides, audio podcasts, and visual infographics, catering to different learner preferences.

Adaptive and Personalized Learning Paths

Multimodal data provides a more granular view of learner behavior. Standard analytics often focus on completion rates and quiz scores. In contrast, a multimodal system can analyze engagement signals across formats—such as how a learner interacts with a simulation, which parts of a video they replay, and the sentiment expressed in their open-ended responses. By combining these "multimodal features," the AI can dynamically adjust the learning journey. If a learner struggles with a text-based explanation but excels during visual simulations, the system can pivot to prioritize visual content, thereby increasing the probability of mastery.
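As a minimal sketch of this kind of adjustment, the function below picks the content format in which a learner has performed best so far. It assumes that performance in each format has already been reduced to scores between 0 and 1, and that at least two attempts are needed before a format is trusted. The name `pick_next_format`, the default of `"text"`, and the threshold are hypothetical; a real adaptive engine would weigh many more signals.

```python
def pick_next_format(scores_by_format: dict[str, list[float]],
                     min_attempts: int = 2,
                     default: str = "text") -> str:
    """Return the format with the highest average score among formats with enough data."""
    averages = {
        fmt: sum(scores) / len(scores)
        for fmt, scores in scores_by_format.items()
        if len(scores) >= min_attempts
    }
    if not averages:
        return default  # assumption: fall back to text when evidence is too thin
    return max(averages, key=averages.get)


learner_scores = {
    "text": [0.4, 0.5],        # struggles with written explanations
    "simulation": [0.9, 0.8],  # does well in visual simulations
    "video": [0.7],            # too few attempts to judge
}
next_format = pick_next_format(learner_scores)
```

Requiring a minimum number of attempts keeps a single lucky or unlucky result from redirecting the whole learning path.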

Immersive Simulations and Real-Time Feedback

In fields such as leadership training or medical education, immersive simulations are essential. Multimodal AI powers these environments by enabling "conversational AI" that can interpret both the words a learner speaks and the visual cues they provide in a virtual environment. This leads to realistic, branching scenarios where the AI provides instant, nuanced feedback. Instead of a simple "correct" or "incorrect" response, the system can explain how the learner’s tone of voice or choice of words might impact the outcome of a high-stakes conversation.

Accessibility and the Inclusion Mandate

One of the most significant implications of multimodal AI is its role in advancing Universal Design for Learning (UDL). Accessibility is no longer an afterthought but a built-in feature of the multimodal framework. AI models can automatically generate high-quality alt-text for images, provide real-time captions for audio, and translate content into dozens of languages while maintaining the original tone and context.

According to the World Health Organization, over 1 billion people live with some form of disability. In the workplace, ensuring that training is accessible is often a legal requirement as well as a moral imperative. Multimodal AI allows Instructional Designers to "design once and deliver everywhere," so that a blind learner can receive through high-quality audio descriptions the same information that a sighted learner receives through video. This flexibility also benefits learners in different environments, such as a field technician who needs to listen to a lesson while their hands are busy, or an office worker who prefers reading text in a quiet environment.

Data Analytics: Moving Beyond Surface Metrics

The integration of multimodal data is revolutionizing learning analytics. Historically, L&D departments have struggled to prove the Return on Investment (ROI) of their programs due to "thin" data. Multimodal systems provide "thick" data by aggregating inputs from multiple sources.

For instance, by analyzing voice interactions in a role-play exercise alongside LMS progress data, organizations can identify "confidence-competence" gaps. A learner might answer a question correctly (competence) but hesitate significantly or use uncertain language in their voice response (lack of confidence). Identifying these nuances allows Instructional Designers to target interventions more precisely, moving from generic training to surgical skill-building. This data-driven approach shifts the role of the designer from a content creator to a performance consultant who uses integrated data to solve specific business problems.
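The confidence-competence gap can be sketched as a simple rule: the answer is correct, but the voice response shows a long pause or several hedging phrases. The example assumes that pause length and a transcript are already available from a speech pipeline. The `VoiceResponse` fields, the `HEDGE_PHRASES` list, and both thresholds are hypothetical values chosen for illustration, not validated measures of confidence.

```python
from dataclasses import dataclass

# Assumption: a short list of hedging phrases approximates "uncertain language".
HEDGE_PHRASES = ("maybe", "i think", "i guess", "not sure", "probably")


@dataclass
class VoiceResponse:
    learner_id: str
    is_correct: bool      # competence signal, e.g. from the LMS
    transcript: str       # what the learner said
    pause_seconds: float  # hesitation before answering


def has_confidence_gap(response: VoiceResponse,
                       max_pause: float = 3.0,
                       max_hedges: int = 1) -> bool:
    """True when the answer is correct but delivery suggests low confidence."""
    text = response.transcript.lower()
    hedges = sum(text.count(phrase) for phrase in HEDGE_PHRASES)
    hesitant = response.pause_seconds > max_pause or hedges > max_hedges
    return response.is_correct and hesitant


responses = [
    VoiceResponse("a", True, "I think it is maybe option B, not sure", 1.0),
    VoiceResponse("b", True, "It is option B", 0.5),
    VoiceResponse("c", False, "Maybe option A, I guess, not sure", 4.0),
]
flagged = [r.learner_id for r in responses if has_confidence_gap(r)]
```

Learner "c" is not flagged because an incorrect answer is a competence problem first, which calls for a different intervention.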

Strategic Implementation for Instructional Designers

For professionals looking to adopt multimodal AI, the transition should be strategic rather than reactive. Industry experts suggest a four-step framework for integration:

- Audit Existing Assets: Review current course libraries to identify multimodal data that is currently underutilized. Many organizations possess vast amounts of video and audio content that can be fed into multimodal models to generate new, updated learning materials.

- Select Targeted Tools: Rather than adopting every new AI tool, designers should focus on platforms that offer "cross-modal" capabilities—those that can handle text-to-video or speech-to-text-to-image workflows seamlessly.

- Redesign for Experience: The focus must shift from "what content do I need to build?" to "what experience will lead to the best outcome?" Designers should leverage AI to handle the rote tasks of content assembly, allowing them to spend more time on the cognitive architecture of the course.

- Establish New Success Metrics: Move beyond completion rates to measure "time to proficiency" and "behavioral change." Multimodal data provides the evidence needed to track these deeper metrics.
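A metric such as "time to proficiency" from the framework above can be made concrete with a short sketch. It assumes that proficiency means the first assessment score at or above a chosen threshold, and that each assessment is recorded with a date. The function name `time_to_proficiency` and the 0.8 threshold are hypothetical; each organization would define proficiency in its own terms.

```python
from datetime import date
from typing import Optional


def time_to_proficiency(start: date,
                        assessments: list[tuple[date, float]],
                        threshold: float = 0.8) -> Optional[int]:
    """Days from the start date to the first assessment meeting the threshold.

    Returns None if the learner has not yet reached the threshold.
    """
    for taken_on, score in sorted(assessments):  # process in chronological order
        if score >= threshold:
            return (taken_on - start).days
    return None


history = [
    (date(2024, 1, 20), 0.85),
    (date(2024, 1, 10), 0.60),
    (date(2024, 1, 15), 0.82),
]
days = time_to_proficiency(date(2024, 1, 1), history)
```

Unlike a completion rate, this figure can be compared before and after a course redesign to see whether learners are reaching the target level sooner.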

Broader Impact and Future Implications

The rise of multimodal AI is fundamentally altering the professional landscape for Instructional Designers. As AI takes over the mechanical aspects of production, the value of the human designer shifts toward empathy, ethical oversight, and high-level strategy. There is a growing consensus among industry leaders that the "Instructional Designer of the Future" will be an AI orchestrator—someone who understands how to prompt, refine, and connect various AI models to create a cohesive learning ecosystem.

However, this transition also brings challenges. The use of multimodal data raises significant questions regarding data privacy and bias. Because these models learn from vast amounts of internet data, they can inadvertently replicate social biases in their visual or text outputs. Instructional Designers must act as the "human in the loop," auditing AI-generated content for accuracy, inclusivity, and brand alignment.

In conclusion, multimodal AI is not merely a trend but a foundational shift in the technological landscape. By aligning digital systems with the way the human brain naturally processes information—through a combination of sight, sound, and language—this technology enables more effective, accessible, and personalized learning. For the field of Instructional Design, the opportunity lies in moving past the limitations of traditional media to create truly integrated, data-informed experiences that drive real-world performance.