Artificial intelligence is no longer a theoretical concept for employers. It is already embedded in the hiring process, often without explicit acknowledgment on the organizational side. While job candidates are rapidly adopting sophisticated AI tools to craft resumes, prepare for interviews, and streamline application submissions, many organizations remain mired in internal deliberations: governance committees, compliance reviews, and the formidable task of preparing large internal datasets for AI integration. The result is a widening gap between the AI capabilities readily available to job seekers and the readiness of hiring teams to manage and use these tools responsibly, and that gap introduces a growing set of risks.

The Proliferation of AI in the Talent Landscape

The current era marks a pivotal shift in how talent interacts with the job market. The democratization of powerful AI tools, particularly generative AI, has empowered candidates like never before. From AI-powered resume builders that optimize keywords for applicant tracking systems (ATS) to virtual interview coaches that simulate common questions and give real-time feedback on delivery, candidates are equipping themselves with a formidable technological advantage. A recent industry survey, for instance, indicated that over 60% of job seekers under 35 used AI to assist with at least one aspect of their job application process in the past year, a figure expected to keep rising. This reflects a broader societal trend in which AI is becoming an everyday utility for personal and professional advancement.

Conversely, many corporate human resources (HR) and talent acquisition (TA) departments, despite recognizing the transformative potential of AI, are still grappling with the foundational elements of its adoption. The journey of AI from early automation in the 2000s (e.g., basic ATS filtering) to the sophisticated predictive analytics and generative capabilities of today has been swift. While early AI applications focused on efficiency in administrative tasks, modern AI promises to revolutionize strategic functions like talent matching, retention prediction, and even workforce planning. However, the rapid pace of innovation has outstripped the capacity of many organizations to integrate these tools effectively and ethically.

The Organizational Lag: A Deeper Dive into Governance and Data Readiness

The central dilemma, as articulated by Jason Putnam, CEO of Vetty, often manifests as a classic chicken-or-egg scenario: should companies wait for fully defined governance structures before building AI capabilities, or should they forge ahead while these frameworks evolve? Research from advisory firms like Kyle & Co. points firmly to the latter, framing AI not as an entirely new beast, but as "simply the next arena" of risk management. HR and TA professionals are already accustomed to operating within high-impact, highly regulated environments, managing everything from labor laws to privacy standards. The current challenge is not the novelty of the risk, but the urgency of building the governance skills and operational processes that responsible AI deployment requires.

The reluctance to move forward stems from legitimate concerns. Preparing internal data for AI is a monumental task, often requiring extensive cleaning, standardization, and anonymization to protect privacy and reduce the risk of bias. Compliance reviews for AI tools are complex, requiring legal expertise to navigate emerging regulations around automated decision-making, data privacy laws (such as GDPR and CCPA), and anti-discrimination laws. Furthermore, establishing governance committees to oversee AI strategy, ethics, and implementation demands cross-functional collaboration and a deep understanding of both technological capabilities and organizational values. The sheer scale and complexity of these preparatory steps often lead to inertia, inadvertently widening the gap between candidate and employer AI proficiency.

Unpacking the Risks of AI Illiteracy: Beyond Inefficiency

Waiting too long to cultivate internal AI capabilities carries severe consequences that extend far beyond mere operational inefficiency. One critical impact is rising candidate abandonment rates. In an age where job seekers expect rapid, seamless interactions, slow, manual processes driven by outdated HR tech stacks can deter top talent. If candidates are using AI to apply for dozens of jobs quickly, and an employer’s process takes weeks for initial screening, highly sought-after individuals will simply move on to more agile organizations. This directly impacts talent pipelines and an organization’s ability to compete for skilled labor.

Furthermore, employers' limited AI literacy leaves them increasingly vulnerable to fraud. With sophisticated AI, candidates can generate highly convincing fake credentials, manipulate employment histories, or even deploy AI personas for initial screening stages. Without AI-powered verification systems or adequately trained human oversight, organizations risk onboarding individuals based on fabricated information, leading to potential security breaches, reputational damage, and financial losses. The ethical implications are equally profound. If HR teams are unaware of the AI embedded in their systems or of how it makes decisions, they cannot effectively audit for bias, ensure fairness, or maintain transparency, which opens the door to discrimination lawsuits and eroded employee trust.

The Imperative for Proactive Governance and Awareness

The first and most critical step toward responsible AI adoption is awareness. In many enterprises, AI capabilities are already present, often stealthily embedded within existing software tools (e.g., in an ATS for resume parsing, or in an assessment platform for candidate scoring), or informally utilized by specific teams to enhance productivity. However, HR and TA leaders frequently lack comprehensive visibility into where these capabilities reside and how they subtly influence critical decision-making processes. This lack of transparency is a significant vulnerability.

Effective AI governance, therefore, begins with robust cross-functional alignment. HR, Talent Acquisition, IT, Legal, Compliance, and Procurement must all converge to define how AI systems operate within the organization, establish clear ethical guidelines, and determine how human judgment is meticulously incorporated into automated processes. This collaboration ensures that clear lines of responsibility are drawn, helping organizations strike the delicate and necessary balance between automation’s efficiency and human oversight’s accountability. While technology can undoubtedly accelerate workflows, the ultimate accountability for hiring decisions, particularly those impacting individuals’ livelihoods, must remain firmly human. Industry analysts emphasize that a fragmented approach, where each department develops its own AI strategy in isolation, inevitably leads to inconsistencies, inefficiencies, and heightened risk exposure.

Strategic Application: Augmenting Human Expertise, Not Replacing It

The most effective application of AI in HR occurs when it complements and enhances human expertise rather than attempting to replace it entirely. As Jason Putnam highlights, consider the complex domain of background screening. Determining the precise criteria required for a specific role, deciding which credentials absolutely must be verified, understanding the relevance of various qualifications, and navigating the labyrinthine compliance requirements are fundamentally human decisions, deeply informed by experience, regulatory knowledge, and strategic insight. These are tasks that AI, despite its sophistication, cannot ethically or effectively perform without human guidance.

However, once these foundational criteria are meticulously established by human experts, AI can then be powerfully deployed to automate laborious validation tasks. This includes continuously monitoring professional licenses for expiration or disciplinary actions, flagging inconsistencies in submitted documentation, or rapidly cross-referencing information against vast public and private databases. When this interdependent approach is correctly applied, it dramatically reduces administrative burden, accelerates the verification process, and simultaneously strengthens verification standards. In this model, human judgment defines the rules and ethical boundaries, while technology acts as an efficient, tireless enforcer. Together, they create a robust system that mitigates risk, ensures accuracy, and significantly speeds up the hiring process without compromising quality or compliance. This collaborative paradigm fosters trust, improves candidate experience, and frees up HR professionals to focus on more strategic, human-centric aspects of their roles.
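The division of labor described above can be illustrated with a minimal Python sketch. It assumes a simplified data model: `LicenseRule`, `LicenseRecord`, and `flag_for_review` are hypothetical names invented for this illustration, not part of any vendor's product or API. A human expert writes the rule; the automated check only flags records for a person to review.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class LicenseRule:
    """Human-defined criterion (hypothetical): the license a role needs
    and how many days before expiry a record should be flagged."""
    role: str
    license_type: str
    warn_days: int


@dataclass(frozen=True)
class LicenseRecord:
    """A license on file for a candidate (illustrative fields only)."""
    candidate: str
    license_type: str
    expires: date
    disciplinary_action: bool = False


def flag_for_review(rule, records, today):
    """Return (candidate, reason) pairs for a human reviewer.

    The function never rejects anyone; it only surfaces records
    that match the conditions a human expert wrote into the rule.
    """
    flags = []
    for rec in records:
        if rec.license_type != rule.license_type:
            continue  # this rule does not cover the license
        if rec.expires < today:
            flags.append((rec.candidate, "expired"))
        elif rec.expires <= today + timedelta(days=rule.warn_days):
            flags.append((rec.candidate, "expiring soon"))
        if rec.disciplinary_action:
            flags.append((rec.candidate, "disciplinary action on record"))
    return flags


# Made-up sample data for illustration.
rule = LicenseRule(role="registered nurse", license_type="RN", warn_days=30)
records = [
    LicenseRecord("Candidate A", "RN", date(2025, 1, 10)),
    LicenseRecord("Candidate B", "RN", date(2025, 6, 1), disciplinary_action=True),
    LicenseRecord("Candidate C", "RN", date(2024, 12, 1)),
    LicenseRecord("Candidate D", "CPA", date(2024, 1, 1)),
]
flags = flag_for_review(rule, records, today=date(2025, 1, 1))
```

In this sketch the rule (which license, how much warning) stays a human decision, and the output is a review queue rather than a hiring outcome, which keeps accountability with the reviewer.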

Navigating the Complex HR Tech Stack and Evolving Legislation

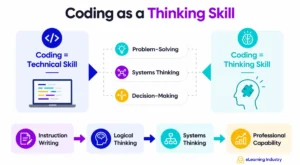

Applying this model consistently across an organization requires broader AI literacy, extending across the entire HR technology stack. As that stack becomes increasingly infused with AI capabilities, AI literacy is rapidly becoming a fundamental requirement for managing it. Organizations must contend with an evolving patchwork of legislation governing background checks, stringent privacy standards, and complex rules around automated decision-making. Simultaneously, their HR systems (from applicant tracking platforms and assessment tools to onboarding software) are themselves integrating diverse AI functionalities.

The challenge intensifies when these disparate systems operate at varying levels of AI maturity. Candidates may be utilizing cutting-edge generative AI tools for their applications, while employers are managing a heterogeneous mix of technologies with inconsistent levels of automation, oversight, and ethical safeguards. Without a coherent, overarching strategy and a unified understanding of AI’s presence and impact across these tools, this imbalance inevitably creates significant operational inefficiencies and substantial compliance risks. The fragmentation can lead to inconsistent candidate experiences, potential biases in screening, and a lack of clear audit trails, making it difficult to demonstrate regulatory compliance.

A Phased Approach to AI Adoption: Building Capabilities Incrementally

The question then becomes how to build this essential AI capability without unduly overcomplicating an already intricate process. The analysts at Kyle & Co. advocate for a practical, incremental approach: initiate with smaller, manageable AI use cases before scaling up to more complex applications. Organizations are not expected to solve enterprise-wide AI challenges overnight. A single pilot program focused on one measurable Key Performance Indicator (KPI), or the automation of one specific functional workflow, can serve as a robust foundation for broader adoption. This iterative strategy allows organizations to learn, adapt, and refine their approach.

AI literacy, like any complex skill, grows through practical experience. Each successful implementation not only strengthens internal governance frameworks but also deepens the organization’s understanding of the technology’s capabilities and limitations. This experiential learning is invaluable for informing the subsequent development of a comprehensive, long-term AI playbook that aligns with the organization’s strategic objectives and ethical commitments.

To move forward effectively, organizations should prioritize several key actions:

- Conduct a Comprehensive AI Audit: Identify where AI is currently embedded within existing tools and where it is being used informally by teams. Gain full visibility into the current AI footprint.

- Establish Clear Governance Policies: Develop robust ethical guidelines, data privacy protocols, and accountability frameworks for all AI applications, ensuring human oversight remains paramount.

- Invest in HR/TA Upskilling: Provide targeted training for HR and TA professionals on AI principles, ethical considerations, bias detection, and how to effectively leverage AI tools.

- Foster Cross-Functional Collaboration: Create dedicated working groups involving HR, IT, Legal, Compliance, and Procurement to develop and implement AI strategies collaboratively.

- Pilot Strategic Use Cases: Begin with small, well-defined AI projects that address specific pain points and offer measurable benefits, such as automating initial resume screening or enhancing candidate communication.

- Develop an AI Playbook: Document best practices, lessons learned, and guidelines for future AI implementations, creating a living document that evolves with the technology.
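
As one way to make the audit step above concrete, the following Python sketch records each place AI is in use and lists the entries that influence hiring decisions without a named human reviewer. The `AIUsage` fields and the `oversight_gaps` helper are assumptions made for illustration, not an established audit standard, and the sample entries are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIUsage:
    """One row of a hypothetical AI inventory."""
    tool: str                       # system or product where AI is in use
    function: str                   # what the AI does there
    embedded_by_vendor: bool        # True if shipped inside an existing tool
    affects_hiring_decision: bool   # True if output influences who advances
    human_reviewer: Optional[str]   # accountable person, or None if unassigned


def oversight_gaps(inventory):
    """Return entries that influence hiring decisions but have no reviewer."""
    return [
        usage for usage in inventory
        if usage.affects_hiring_decision and usage.human_reviewer is None
    ]


# Made-up sample inventory for illustration.
inventory = [
    AIUsage("ATS", "resume parsing", True, True, None),
    AIUsage("Assessment platform", "candidate scoring", True, True, "TA lead"),
    AIUsage("Chat assistant", "drafting job ads", False, False, None),
]
gaps = oversight_gaps(inventory)
gap_tools = [usage.tool for usage in gaps]
```

Even a simple inventory like this gives the cross-functional working group a shared list to start from, and the gap list points to where human oversight needs to be assigned first.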

The Future of HR: Adapting for Enduring Competitive Advantage

Ultimately, the organizations that address these priorities now will be best positioned not only to keep pace with the accelerating technological landscape but also to thrive within it. What organizations cannot afford is to wait. AI is already reshaping how candidates approach hiring, from their initial application strategy to their interview preparation. Employers that delay building their own AI capabilities risk falling significantly behind, not merely in operational efficiency and cost-effectiveness, but critically in establishing trust with their workforce, ensuring regulatory compliance, and maintaining a competitive advantage in the global talent market.

In hiring, as in most complex organizational systems, the entities that consistently succeed are those that adapt early and cultivate the operational discipline needed to manage profound technological and cultural change. Jason Putnam, with his extensive background in SaaS and HR technology, continues to advocate for a forward-thinking approach, emphasizing that Vetty’s mission to transform how organizations hire (faster, smarter, and with greater confidence) is inextricably linked to the responsible and strategic adoption of AI. His vision underscores that trust, innovation, and strategic execution are not just buzzwords, but the essential pillars upon which the future of talent acquisition will be built.