New polling from Lenovo suggests that the widespread, and often unregulated, use of artificial intelligence in organizations is creating operational risks, increasing costs, and slowing the return on investment from AI initiatives. The company’s latest Work Reborn Report, based on a survey of 6,000 employees worldwide, claims that more than 70 percent of employees now use AI tools on a weekly basis. Up to a third of this activity is taking place without formal oversight from IT departments, contributing to the growth of so-called shadow AI.

The findings point to a widening gap between the adoption of AI and organizations’ ability to manage it effectively. While 80 percent of respondents expect to increase their reliance on AI over the next year, governance and control mechanisms are not keeping pace. This has implications beyond IT functions, particularly for security leaders who must manage expanding risks across devices, endpoints, and data flows.

According to the report, 61 percent of IT leaders have already seen an increase in cybersecurity threats linked to AI. However, only 31 percent say they feel confident in their ability to manage these risks. Concerns are also reflected among employees, with 43 percent expressing worries about data exposure or AI-related attacks.

Lenovo argues that the lack of oversight is already affecting business performance. Fragmented adoption of AI across teams is associated with delayed returns, duplicated spending on overlapping tools, and limited visibility into which systems are delivering value. The report also highlights uneven access to AI across the workforce, with some employees operating within managed environments while others rely on unapproved tools to maintain productivity. This disparity is described as contributing to a "two-speed" workforce, potentially slowing decision-making and increasing inefficiencies.

The research suggests that many organizations are attempting to manage AI across disconnected systems, with devices, infrastructure, and security handled separately. This fragmentation is identified as a key factor behind the gap between AI usage and effective execution.

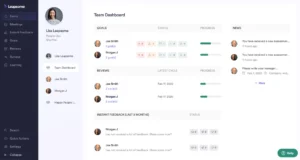

Lenovo’s report outlines an alternative approach centered on integrating device management, infrastructure, and security into a single operating model. It argues that embedding control at the device level, combined with ongoing managed security services, could help organizations reduce complexity and improve oversight.

The findings reflect broader concerns about how organizations govern emerging technologies, particularly as adoption accelerates faster than internal systems and policies can adapt.

The Rise of Shadow AI: A Growing Concern for Businesses

The rapid proliferation of artificial intelligence (AI) tools across the global workforce is a double-edged sword for businesses. While AI’s potential to enhance productivity, drive innovation, and streamline operations is widely acknowledged, a significant challenge is emerging: the uncontrolled and often unmonitored use of these technologies. Lenovo’s Work Reborn Report, published on April 27, 2026, finds that a substantial portion of AI adoption is occurring outside the purview of IT departments, a phenomenon often referred to as "shadow AI."

The report, which surveyed 6,000 employees worldwide, found that more than 70 percent use AI tools on a weekly basis, and that up to a third of this activity takes place without formal oversight from IT departments. This lack of centralized governance creates fertile ground for a range of operational and security risks, potentially undermining the very benefits organizations hope to gain from AI.

Escalating Risks in the AI-Driven Workplace

The implications of shadow AI extend well beyond inefficiency. Cybersecurity leaders face an increasingly complex threat landscape as AI tools, often deployed without proper vetting, become new vectors for attack. According to the report, 61 percent of IT leaders have already seen an increase in cybersecurity threats linked to AI, yet only 31 percent say they feel confident in their ability to manage these risks.

Employee sentiment mirrors this confidence gap: 43 percent of surveyed employees voiced concerns about data exposure or AI-related attacks, suggesting growing unease about the security implications of tools they increasingly rely on. The fact that many AI tools need access to sensitive organizational data to function effectively heightens these concerns. Without robust security protocols and clear usage guidelines, the risk of data breaches, intellectual property theft, and unauthorized access to confidential information rises considerably.

Financial and Operational Ramifications of Unmanaged AI

Beyond cybersecurity, uncontrolled AI adoption carries tangible financial and operational costs. The report associates fragmented implementation of AI across teams with delayed returns on investment. The delay can be attributed in part to duplicated effort and spending on overlapping tools that serve similar purposes: when departments independently procure and deploy AI solutions, the organization loses visibility into which systems are truly delivering value and which are redundant.

The report also points to uneven access to AI across the workforce. Some employees work within sanctioned, managed AI environments, with IT-supported tools and training, while others rely on unapproved, often free or low-cost, AI applications to stay productive and meet the demands of their jobs. The report describes this disparity as a "two-speed" workforce, in which employees with access to integrated, supported AI tools can work more efficiently than their counterparts. The result can be greater inefficiency, slower decision-making, and a widening skills gap within the organization.

The Challenge of Fragmented Infrastructure

A core challenge identified by Lenovo’s research is how many organizations are attempting to manage AI: devices, underlying infrastructure, and security are often treated as separate domains. This siloed approach is poorly suited to modern AI deployments, which span all three. As AI tools become more sophisticated and interconnected, managing them across a fragmented ecosystem becomes increasingly complex and prone to oversight failures. The report identifies this disconnect as a key contributor to the gap between widespread AI adoption and organizations’ ability to execute their AI strategies effectively and securely.

A Proposed Solution: Integrated Management and Device-Level Control

In response, the Work Reborn Report proposes a different approach to AI governance: a unified operating model that integrates device management, infrastructure, and security. At the core of this proposal is embedding control mechanisms directly at the device level. By governing and monitoring AI usage from the endpoint outward, the report argues, organizations could gain greater oversight and mitigate many of the risks associated with shadow AI.

Combined with ongoing managed security services, this approach is presented as a way to reduce complexity and strengthen control. When device management, infrastructure, and security are managed together, organizations can apply standardized AI policies, enforce security protocols consistently, and gain clearer visibility into AI usage and its impact. In Lenovo’s view, this could streamline AI adoption, improve resource allocation, and shorten the time it takes to see a return on AI investments.

Broader Implications for Digital Transformation

The findings of the Lenovo report resonate with a wider industry discussion about the governance of rapidly evolving technologies. As businesses increasingly embrace digital transformation, the speed at which new tools and platforms are adopted often outpaces the development of internal policies, governance frameworks, and the necessary technical infrastructure to manage them effectively. This creates a recurring challenge: how to harness the power of innovation without succumbing to the risks of unchecked implementation.

The acceleration of AI adoption, in particular, has placed immense pressure on IT departments and security teams. The ease with which employees can access and utilize powerful AI tools, often without requiring significant technical expertise, has democratized AI but also decentralized its management. This decentralization necessitates a more agile and adaptable governance model than traditional IT frameworks might offer.

Organizations that fail to address uncontrolled AI usage risk not only greater security vulnerabilities and financial inefficiencies but also an erosion of trust between employees and IT departments. A proactive, integrated approach to AI governance, as Lenovo suggests, is becoming less a best practice than a necessity for organizations that want to capture the benefits of artificial intelligence responsibly.

Expert Perspectives and Industry Reactions (Inferred)

While the Lenovo report offers a clear perspective, industry analysts and cybersecurity experts have echoed similar concerns. Dr. Anya Sharma, a leading AI ethicist, commented, "The findings underscore a critical juncture in AI adoption. Organizations are so eager to reap the benefits of AI that they are often overlooking the foundational requirement of robust governance. This ‘move fast and break things’ mentality, while sometimes effective for innovation, can be disastrous when applied to technologies with such far-reaching implications for data security and operational integrity."

Similarly, the cybersecurity firm SecureNet Solutions released a statement on April 28, 2026, noting, "We are seeing a significant rise in AI-assisted cyberattacks, ranging from sophisticated phishing campaigns to the exploitation of vulnerabilities in unmanaged AI applications. The Lenovo report’s data on IT leaders’ lack of confidence in managing AI risks is a direct reflection of the challenges our clients are facing. Proactive, integrated security strategies that encompass device, network, and data protection are no longer optional but essential."

A Timeline of AI Governance Evolution

The current concerns around shadow AI did not arise suddenly; they reflect an ongoing evolution in how organizations handle technological integration.

- Early 2020s: Initial widespread adoption of generative AI tools by individuals, often for personal productivity. IT departments begin to notice increased usage of cloud-based AI services.

- 2023-2024: Growing awareness of "shadow IT" expanding into AI. Reports emerge of employees using AI for work-related tasks without explicit organizational approval. Cybersecurity incidents linked to AI are documented more frequently.

- 2025: Lenovo’s initial research may have begun to flag the scale of the issue, leading to the comprehensive Work Reborn Report. Industry bodies start developing guidelines and best practices for AI governance.

- April 2026: Publication of the Lenovo Work Reborn Report, bringing wider attention to the risks and financial implications of uncontrolled AI usage and potentially prompting organizations to reassess their AI strategies and implement more robust governance frameworks.

- Late 2026 Onwards: Expected increase in investment in integrated AI management platforms, managed security services for AI, and employee training programs focused on responsible AI use. Focus shifts from simply adopting AI to strategically and securely integrating it into core business operations.

Conclusion: The Path Forward for Responsible AI Integration

The uncontrolled use of AI in organizations is no longer a theoretical concern; it has tangible implications for risk, cost, and return on investment. Lenovo’s Work Reborn Report provides data on how this landscape is evolving. As businesses continue to adopt AI, a strategic, integrated approach to governance, security, and management is essential. By embedding control at the device level, fostering collaboration between IT and business units, and prioritizing employee education, organizations can mitigate the risks of shadow AI and help ensure that their AI initiatives drive sustainable growth rather than create unforeseen problems. The future of AI in the workplace will depend on how well organizations balance rapid adoption with diligent oversight.