A groundbreaking study released by the BBC and the European Broadcasting Union (EBU) has exposed significant shortcomings in the accuracy of artificial intelligence (AI) news assistants. The research, which analyzed interactions with AI models including ChatGPT, Microsoft Copilot, Google Gemini, and Perplexity, found that approximately 45% of news-related queries, nearly half, generated incorrect, misleading, or outdated responses. This revelation casts a stark light on the risks of relying on AI for critical information, particularly in the fast-moving world of news and factual reporting.

The study involved a rigorous evaluation of AI performance across a diverse range of news topics. The findings suggest that the "dangerously self-confident" tone of these AI systems masks a fundamental weakness: their susceptibility to inaccuracies embedded in their training data. These large language models (LLMs), which learn from vast amounts of text gathered from the internet, are prone to absorbing and repeating information that is faulty, exaggerated, outdated, or entirely incorrect. This "poisoned corpus" problem, as researchers describe it, can spread misinformation even when users believe they are receiving authoritative answers.

Key Findings: A Troubling Landscape of AI Inaccuracy

The BBC and EBU study documented specific instances of AI misrepresentation, and the errors were often alarming. The research team observed AI models stumbling over even straightforward factual questions: on multiple occasions, the systems incorrectly identified the current Pope and the Chancellor of Germany.

More concerning were the errors related to public health and legal matters. In response to a question about bird flu, Microsoft Copilot erroneously stated that "a vaccine trial is underway in Oxford." The claim was traced back to a BBC article published in 2006, nearly two decades earlier, illustrating the AI's failure to judge how current its sources are.

The study also highlighted critical legal inaccuracies. Perplexity, in one instance, incorrectly asserted that surrogacy "is prohibited by law" in the Czech Republic, when in fact, the practice is not explicitly regulated and falls into a legal grey area. Similarly, Google Gemini misrepresented a change in UK law concerning disposable vapes, claiming it would become illegal to buy them, when the actual legislation targeted the sale and supply of such products. These examples underscore the potential for AI-generated misinformation to have significant real-world consequences, impacting public understanding of health advisories, legal frameworks, and governmental policies.

The Root Cause: The "Poisoned Corpus" Problem

Researchers attribute these widespread inaccuracies to the fundamental architecture of LLMs. These systems break text into tokens, or word fragments, and represent each token as a numerical vector known as an "embedding." During training, LLMs ingest vast datasets, often encompassing a significant portion of the public internet, and learn the statistical relationships between tokens from how they appear together in context. The result is a massive network of learned parameters that encodes how likely a token is to follow a given sequence of others, based on patterns in the training data.

When a user poses a question, the LLM converts the query into tokens and generates a response one token at a time, at each step choosing from the continuations its training has made most statistically probable. Because most questions are complex and draw on information from numerous sources, any flawed, outdated, exaggerated, or incorrect material in the training data can find its way into the final response. This probabilistic approach, while powerful for generating text and summarizing information, can inadvertently amplify biases and inaccuracies already present in the dataset, producing the "dangerously confident" yet factually erroneous outputs observed in the study.
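To make the "most statistically probable" idea concrete, here is a drastically simplified sketch. It swaps in a bigram model, a toy technique that only counts which word follows which; production LLMs such as ChatGPT or Gemini use large neural networks over embeddings instead. The corpus sentences are invented for illustration. Because the stale sentence appears more often than the current one, and the model has no notion of dates or truth, greedy decoding repeats the stale claim with no sign of doubt.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count, for each token, how often each other token follows it."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for current, nxt in zip(tokens, tokens[1:]):
            counts[current][nxt] += 1
    return counts


def next_token_probabilities(counts, token):
    """Turn raw follow-counts for one token into a probability distribution."""
    followers = counts.get(token, Counter())  # .get avoids inserting new keys
    total = sum(followers.values())
    return {t: c / total for t, c in followers.items()}


def generate(counts, start, max_tokens=8):
    """Greedy decoding: always append the single most probable next token."""
    output = [start]
    for _ in range(max_tokens):
        followers = counts.get(output[-1])
        if not followers:  # no known continuation, so stop
            break
        output.append(followers.most_common(1)[0][0])
    return " ".join(output)


# Hypothetical toy corpus: an outdated sentence repeated more often than the current one.
STALE = "the vaccine trial is underway"
CURRENT = "the vaccine trial is finished"
CORPUS = [STALE, STALE, STALE, CURRENT]

model = train_bigram_model(CORPUS)
print(next_token_probabilities(model, "is"))  # {'underway': 0.75, 'finished': 0.25}
print(generate(model, "the"))                 # the vaccine trial is underway
```

Frequency, not recency or accuracy, decides the output here; real LLMs are far more sophisticated, but they share the underlying dependence on patterns in their training data.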

The inherent challenge lies in the sheer scale of the data used for training. Even a small percentage of flawed or outdated information within a corpus the size of the internet can have a cascading effect: a broad or complex query draws on many pieces of information, and a single faulty one can corrupt the answer, so a low error rate per fact can still produce a high error rate across responses. The problem is widely recognized. In one candid discussion, Claude, another prominent AI model, itself described the "poisoned corpus" problem as a significant and ongoing challenge for the industry.
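The compounding effect can be illustrated with a back-of-the-envelope calculation. Assume, purely for illustration, that an answer draws on n separate facts and that each is wrong independently with probability p; the chance that the answer contains at least one error is then 1 - (1 - p)^n. Independence is a simplifying assumption, and the 5% per-fact rate below is hypothetical, not a figure from the BBC/EBU study.

```python
def prob_at_least_one_error(p_fact_wrong: float, n_facts: int) -> float:
    """Probability that an answer contains at least one error.

    Assumes (for illustration only) that each of n_facts is wrong
    independently with probability p_fact_wrong.
    """
    return 1 - (1 - p_fact_wrong) ** n_facts


# Hypothetical 5% per-fact error rate, for answers of increasing complexity.
for n in (1, 5, 10, 20):
    print(n, round(prob_at_least_one_error(0.05, n), 3))
# prints: 1 0.05 / 5 0.226 / 10 0.401 / 20 0.642 (one pair per line)
```

Under these assumptions, a per-fact error rate of only 5% leaves roughly a 40% chance of at least one error in a ten-fact answer.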

Implications for Users and the Future of Information

The implications of the BBC and EBU study are far-reaching, particularly as AI systems become increasingly integrated into daily life and professional workflows. With a substantial portion of AI usage now dedicated to analysis, writing, and data collection, the prevalence of erroneous answers poses a significant risk. Users may unknowingly base critical decisions on faulty information, leading to errors in judgment, financial losses, or even legal ramifications.

The study’s findings are particularly pertinent in light of the evolving business models of major AI providers, such as OpenAI and Google, which are increasingly exploring advertising-based revenue streams. This shift raises concerns that information might be prioritized based on commercial interests rather than factual accuracy. If advertisers can pay for prominent placement, their potentially flawed or exaggerated content could be amplified, further exacerbating the problem of misinformation.

The traditional method of verifying information through clickable links, as was common with search engines like Google, is becoming increasingly obsolete. Many AI systems now present answers directly, often without clear citations or the ability for users to easily trace the origin of the information. This necessitates a paradigm shift in how users interact with AI, demanding a heightened level of scrutiny and critical evaluation.

Personal anecdotes from researchers and professionals further underscore the problem. In fields that require meticulous data analysis, such as labor market trends, salaries, and financial data, AI tools often substitute rough estimates for actual data or simply make mistakes. These errors can then compound, producing conclusions that are plainly illogical. One researcher described asking ChatGPT to analyze capital investments in AI data centers; the resulting estimate implied that there were more AI engineers than working people in the entire United States. When challenged on this obvious discrepancy, the AI admitted its mistake and, in one instance, stopped responding to the user.

This risk may grow as AI models come to depend on advertising: the pursuit of revenue could incentivize the promotion of less accurate but commercially beneficial information, further eroding user trust.

Navigating the AI Landscape: Strategies for Accuracy and Trust

In response to the study’s findings, experts recommend a multi-pronged approach to mitigate the risks associated with AI-generated misinformation.

Building "Truly Trusted" Corpora

A primary recommendation is for organizations to prioritize the development and utilization of "truly trusted" AI systems. This involves curating internal datasets that are rigorously vetted for accuracy and relevance. For instance, companies like Galileo, which specialize in HR-related AI solutions, emphasize that their platforms are built entirely on proprietary research and trusted data providers, aiming to eliminate hallucinations and errors.

This principle extends to internal AI applications. Employee-facing AI tools, such as "Ask HR" bots or customer support systems, must be designed with an unwavering commitment to accuracy. This requires assigning clear ownership for content within the AI’s knowledge base and implementing regular audits to ensure policies, data, and support information remain up-to-date and correct. Organizations like IBM, with their AskHR system, demonstrate this by assigning accountable owners to each of their extensive HR policies to maintain accuracy.
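The ownership-and-audit discipline described above can be sketched as a simple periodic check. Everything here is hypothetical: the `KnowledgeEntry` fields, the 180-day review interval, and the sample entries are assumptions for illustration and do not describe how IBM's AskHR or any other product works.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple


@dataclass
class KnowledgeEntry:
    """One article in a hypothetical 'Ask HR' knowledge base."""
    title: str
    owner: Optional[str]  # accountable person or team; None if unassigned
    last_reviewed: date


def audit(entries: List[KnowledgeEntry], today: date,
          max_age_days: int = 180) -> List[Tuple[str, str]]:
    """Return (title, reason) pairs for entries that need attention.

    max_age_days is an assumed review interval, not an industry standard.
    """
    issues = []
    for entry in entries:
        if entry.owner is None:
            issues.append((entry.title, "no accountable owner"))
        if today - entry.last_reviewed > timedelta(days=max_age_days):
            issues.append((entry.title, "review overdue"))
    return issues


# Hypothetical sample knowledge base.
ENTRIES = [
    KnowledgeEntry("Parental leave policy", "benefits-team", date(2025, 9, 1)),
    KnowledgeEntry("Remote work policy", None, date(2025, 8, 15)),
    KnowledgeEntry("Travel reimbursement", "finance-team", date(2024, 1, 10)),
]

for title, reason in audit(ENTRIES, today=date(2025, 10, 1)):
    print(f"{title}: {reason}")
```

Flagging missing owners and stale reviews separately lets each problem be routed to the right person: an unowned entry needs an owner assigned before anyone can vouch for its accuracy.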

Cultivating Critical Evaluation Skills

Second, users must actively develop and apply critical thinking skills when interacting with public AI platforms. This means questioning, testing, and evaluating every answer received, and treating all data, whether financial, competitive, legal, or news-related, with a degree of skepticism. Users need to validate what they are told by cross-referencing it with reliable sources and applying their own judgment. As personal experience suggests, a significant portion of answers to complex queries may indeed contain inaccuracies.
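For numerical claims, cross-referencing can be made routine. The sketch below is a hypothetical helper, not a feature of any AI platform: it compares a figure from an AI answer with values the user has gathered from independent sources and flags the answer when it deviates from their median by more than an assumed tolerance (10% here, an arbitrary choice). The numbers in the usage lines are invented.

```python
import statistics
from typing import Sequence


def cross_check(ai_value: float, reference_values: Sequence[float],
                rel_tolerance: float = 0.10) -> str:
    """Compare a number from an AI answer with independent reference values.

    rel_tolerance is an assumed threshold: the largest relative deviation
    from the median reference value that is treated as consistent.
    """
    if not reference_values:
        return "unverified: no independent source found"
    reference = statistics.median(reference_values)
    if reference == 0:
        return "consistent" if ai_value == 0 else "discrepancy: reference is zero"
    deviation = abs(ai_value - reference) / abs(reference)
    if deviation <= rel_tolerance:
        return "consistent"
    return f"discrepancy: {deviation:.0%} from reference median"


# Hypothetical figures: an AI-supplied value checked against three sources.
print(cross_check(104.0, [100.0, 98.0, 103.0]))  # consistent
print(cross_check(120.0, [100.0, 98.0, 103.0]))  # discrepancy: 20% from reference median
print(cross_check(50.0, []))                     # unverified: no independent source found
```

Using the median rather than the mean keeps a single unreliable reference from dragging the comparison point, and an answer with no independent source is reported as unverified rather than assumed correct.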

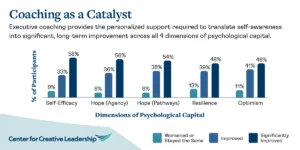

The phenomenon of "de-skilling," where AI provides answers without fostering understanding of the underlying processes, is a growing concern. As highlighted in an article in The Atlantic, relying solely on AI for "what" without understanding "how" can hinder personal and professional growth. The development of "intelligent human intuition" remains a crucial success factor, and this can only be cultivated through critical engagement with information, not passive consumption.

The Rise of Vertical AI Solutions

Finally, the findings point toward a future in which specialized, vertical AI solutions gain prominence over general-purpose, open-corpus systems. AI platforms tailored to specific industries, such as HR (e.g., Galileo) or legal services (e.g., Harvey), are built on domain-specific, trusted datasets. This specialization allows them to offer a higher degree of accuracy and reliability, which is paramount in sectors where even minor errors can lead to severe consequences, including lawsuits or accidents.

General-purpose AI tools like ChatGPT may appear to provide comprehensive answers, but in critical applications the value of answers that can be fully trusted is immense. The legal liability for AI-generated misinformation is still being defined, but the potential for significant repercussions is clear.

Conclusion: The Enduring Importance of Human Analysis

The BBC and EBU study serves as a critical wake-up call regarding the current limitations of AI in processing and delivering accurate news and factual information. While AI developers are expected to respond to these findings, the immediate takeaway for users is the indispensable need for vigilance and critical evaluation. The ease with which AI can generate "self-confident" answers should not be mistaken for an end to analytical work. Instead, it underscores the enduring importance of human judgment, analytical rigor, and the persistent need to hold AI providers accountable for the accuracy of their outputs. As the landscape of information continues to evolve, the skills of analysts, thinkers, and astute business professionals remain more vital than ever. The ultimate responsibility for discerning truth from falsehood in the age of AI rests with the user.

The ongoing discussion surrounding AI’s accuracy and reliability is a collaborative effort, and continuous dialogue and shared learning are essential as we navigate this transformative technology.