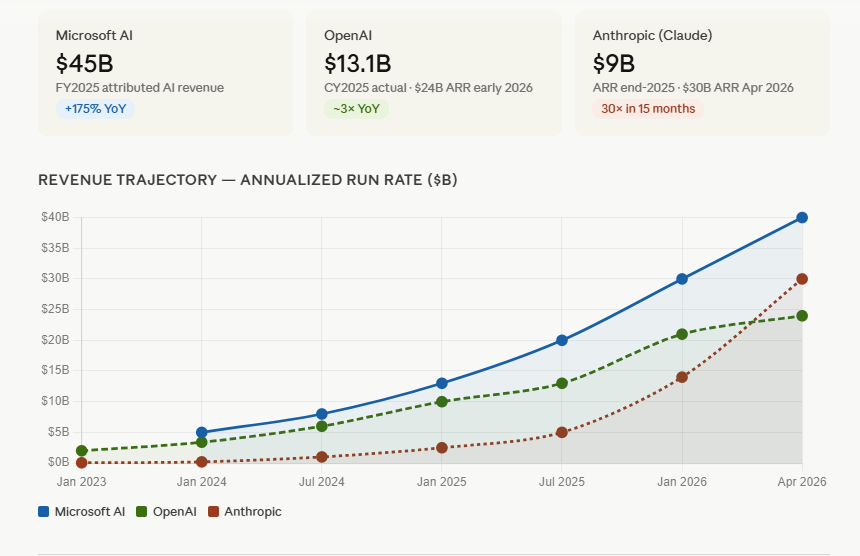

As the artificial intelligence industry braces for potential blockbuster IPOs from OpenAI and Anthropic, a significant narrative is unfolding beyond the immediate competitive dynamics of these AI pioneers. While the public and industry observers keenly anticipate the financial valuations and market strategies of these two prominent companies, a compelling case can be made that Microsoft is poised to be an even greater beneficiary of the burgeoning enterprise AI market. This analysis delves into the multifaceted landscape of enterprise AI, exploring the critical components of model development, application surfacing, and ecosystem integration, ultimately arguing for Microsoft’s unique position to capture substantial market share and value.

The AI industry is experiencing a period of intense fascination and strategic maneuvering, particularly as leading companies like OpenAI and Anthropic navigate the complex path toward public offerings. These potential IPOs, coupled with the ongoing narrative of competing leadership and technological advancements, have placed the AI sector at the forefront of global business and technological discourse. However, the intricate interplay of innovation, market adoption, and strategic partnerships suggests a broader distribution of success than a direct head-to-head competition might imply.

The enterprise market for artificial intelligence can be broadly segmented into three interconnected layers, each presenting distinct challenges and opportunities: the foundational AI models, the application "surfaces" or user experiences, and the overarching ecosystem that supports their integration and scalability.

The Foundation: Navigating the AI Model Landscape

The first and most fundamental layer is the AI model itself. The current trajectory of AI development clearly indicates that a singular, all-encompassing model is unlikely to dominate. Instead, the market is moving towards specialization, where different models are optimized for specific applications and domains.

Evolving Model Specialization:

The question of which applications will leverage which models is a central theme. For instance, coding and analytical tasks might find their optimal tools in Anthropic’s Claude, while narrative generation and document processing could lean towards OpenAI’s models. Google’s Gemini may target complex analysis and scientific applications, and technologies like Grok could find their niche in robotics and motion control. The eventual role of world models, such as those being developed by Nvidia, remains an open area of exploration.

This product-market fit is still in its nascent stages. AI research labs are continuously refining their algorithms and data training methodologies to cater to diverse needs. The realization is dawning that a one-size-fits-all approach is not viable. Model training extends far beyond mere computational power; it encompasses the meticulous collection, labeling, and refinement of data. A pharmaceutical company seeking an AI model to understand intricate protein structures and advanced genetics requires a platform specifically trained in that domain, not a generalized model.

Anthropic has recently set a notable pace in code generation, a foundational capability for many AI-driven tasks. However, the strategic focus of major players remains a subject of intense speculation. Will OpenAI pivot more aggressively into healthcare applications? Will Google dedicate significant resources to biological research AI? Which model will ultimately excel in optimizing for the physical world, encompassing robotics, manufacturing, and transportation? Nvidia and potentially Grok are contenders in these areas.

For business buyers, the implication is clear: a diverse portfolio of AI models will be necessary. Therefore, any AI provider claiming to offer an all-encompassing solution may struggle to maintain credibility in the long run. This trend is mirrored in specialized AI solutions that achieve remarkable intelligence by focusing on specific verticals. For example, an AI platform dedicated to HR, labor markets, skills, and management topics can evolve into an indispensable strategic consultant for human capital challenges, akin to the evolution of AI Galileo in the HR domain.

The Interface: Crafting the AI Application Experience

The second critical layer is the "surface" or the application experience surrounding the AI model. This layer is paramount for user adoption and practical utility. It encompasses the desktop environments, toolsets, integration capabilities, and development tools that make AI accessible and effective. These are not merely models; they are applications that require robust user interfaces, efficient memory management, personalized interactions, and seamless integration with external data sources and existing systems. This layer is often referred to as the "AI Harness."

The Primacy of User Experience:

In today’s digital landscape, user experience (UX) has become a dominant factor. If Apple’s Siri were to become exceptionally intelligent and user-friendly, it could rapidly garner a billion users. The underlying model would be only one component of that success; the ease of interaction and the overall user journey would matter far more.

Microsoft’s historical dominance in the personal computer market offers a relevant parallel. The company achieved this by both licensing and innovating graphical interfaces and by relentlessly focusing on the application experience of its flagship products like Excel, PowerPoint, and Outlook, integrated within the Windows operating system. While rivals such as VisiCalc and Lotus 1-2-3 predated Excel, the eventual "fit and finish" and comprehensive integration of Microsoft’s suite, now sold as Microsoft 365, ultimately won over a massive user base, evidenced by its hundreds of millions of paying subscribers.

For enterprise developers and IT departments, the need for a cohesive and integrated AI experience is equally pressing. This includes:

- Intuitive Interfaces: Easy-to-use tools that require minimal training.

- Seamless Integration: Ability to connect with existing enterprise resource planning (ERP), customer relationship management (CRM), and other critical business systems.

- Developer Tooling: Robust platforms for building and customizing AI-powered applications.

- Management and Governance: Tools for IT departments to oversee AI deployment, security, and compliance.

- Personalization: AI that adapts to individual user needs and workflows.

Even if a business is impressed by the capabilities of models like Claude or Gemini, their successful adoption within an organization hinges on the availability of a comprehensive suite of complementary tools and services.

The integration of AI surfaces with established enterprise systems such as SAP, Oracle, Workday, Salesforce, ServiceNow, QuickBooks, and HubSpot is a critical determinant of their success. One attempt to use Claude with HubSpot, in which the system "choked" and failed to retrieve the requested data, shows that even a well-intentioned integration can fail at the "surface" layer rather than in the underlying model. This failure underscores the importance of a robust "context layer" capable of accurately querying and retrieving relevant information.

The Ecosystem: Building a Network of Support and Innovation

The third vital component is the ecosystem surrounding AI platforms. Businesses require AI solutions that are not only powerful but also supported by a wide array of applications, integrations, tools, and third-party vendors.

The Network Effect in AI:

In the development of specialized AI solutions like AI Galileo, the immediate need for connectivity to diverse customer systems became apparent. Customers frequently requested integrations with their policy databases, leadership models, and compliance training platforms, necessitating the development of solutions beyond the core platform. This highlights a fundamental requirement for AI vendors in the enterprise space: fostering an ecosystem of partners who can generate revenue by building upon their platforms.

Discussions with HR and IT leaders consistently reveal a dual demand. While businesses seek readily available, packaged AI tools for immediate employee needs, they prioritize platforms that enable the creation, procurement, and management of "agentic" applications. These applications are intended to complement or replace existing enterprise systems, valued at trillions of dollars globally. Crucially, businesses are wary of vendor lock-in in such a dynamic and rapidly evolving market. Consequently, the focus shifts from the underlying AI "engine" to the "surface" – the accessible and integrated application experience.

The Surface vs. The Model: A Paradigm Shift

The discourse in the AI industry is increasingly shifting from a sole focus on "models" to an emphasis on "AI surfaces." A surface represents the application experience, distinct from the underlying Large Language Model (LLM). It is the application built on top of the AI that truly matters, and its effectiveness arises from the synergy between the surface and the model.

In the corporate environment, the "surface" is defined by the usability of the tools, their speed, the richness of historical data available, and the efficacy of the semantic connectivity layer. For instance, connecting an AI to an HR system or email platform should yield valuable, actionable insights, not just random data points. The aforementioned failure of Claude’s HubSpot integration illustrates a deficiency in the "surface" rather than the model itself.

Anthropic and OpenAI, despite their advanced models, face the challenge of building these comprehensive surfaces. Their strategy often relies on third-party developers, such as ServiceNow, Microsoft, or Accenture, to create these crucial application layers. If these integration partners deliver subpar experiences, the entire platform’s reputation can suffer, hindering adoption.

Enter Microsoft: The Strategic Powerhouse

In this evolving landscape, Microsoft appears to be strategically positioned for significant gains. While OpenAI and Anthropic are projected to generate substantial revenue, a closer examination reveals how Microsoft is capitalizing on the "surface" layer of AI.

Recent financial analyses indicate that approximately 70% of OpenAI’s projected $30 billion in revenue may stem from consumer subscriptions, that is, from a substantial consumer base paying monthly fees. A similar share of Anthropic’s revenue, conversely, might come from selling AI capacity to other providers and large enterprise clients.

Microsoft’s Revenue Generation:

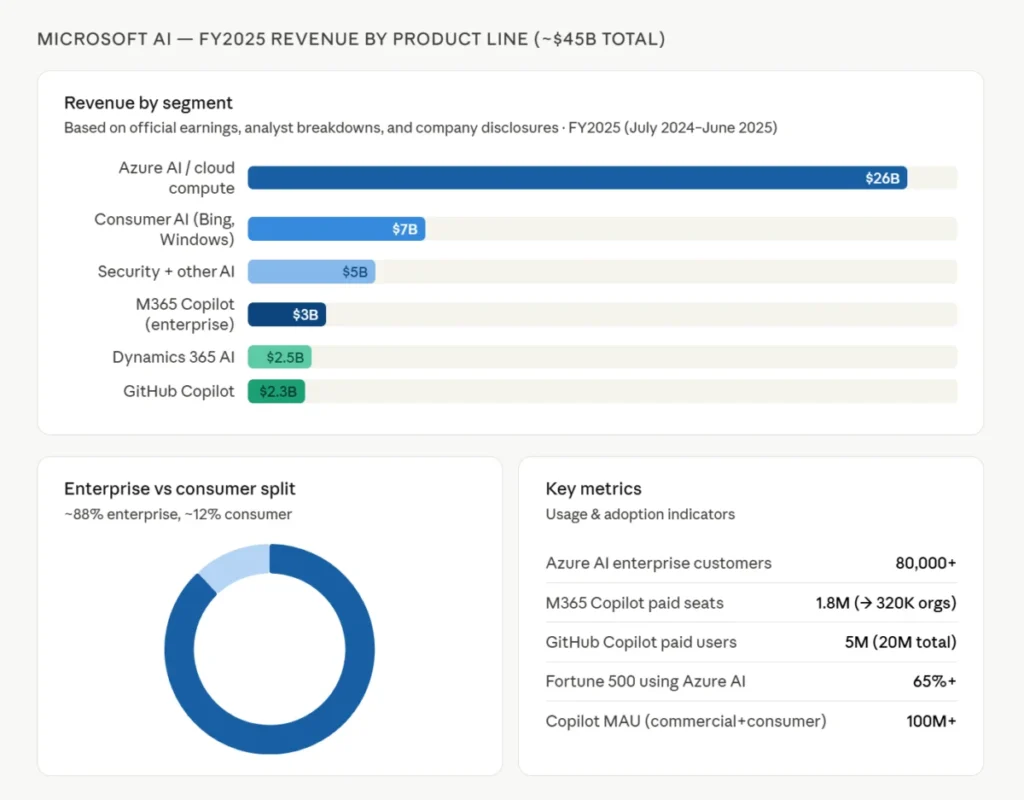

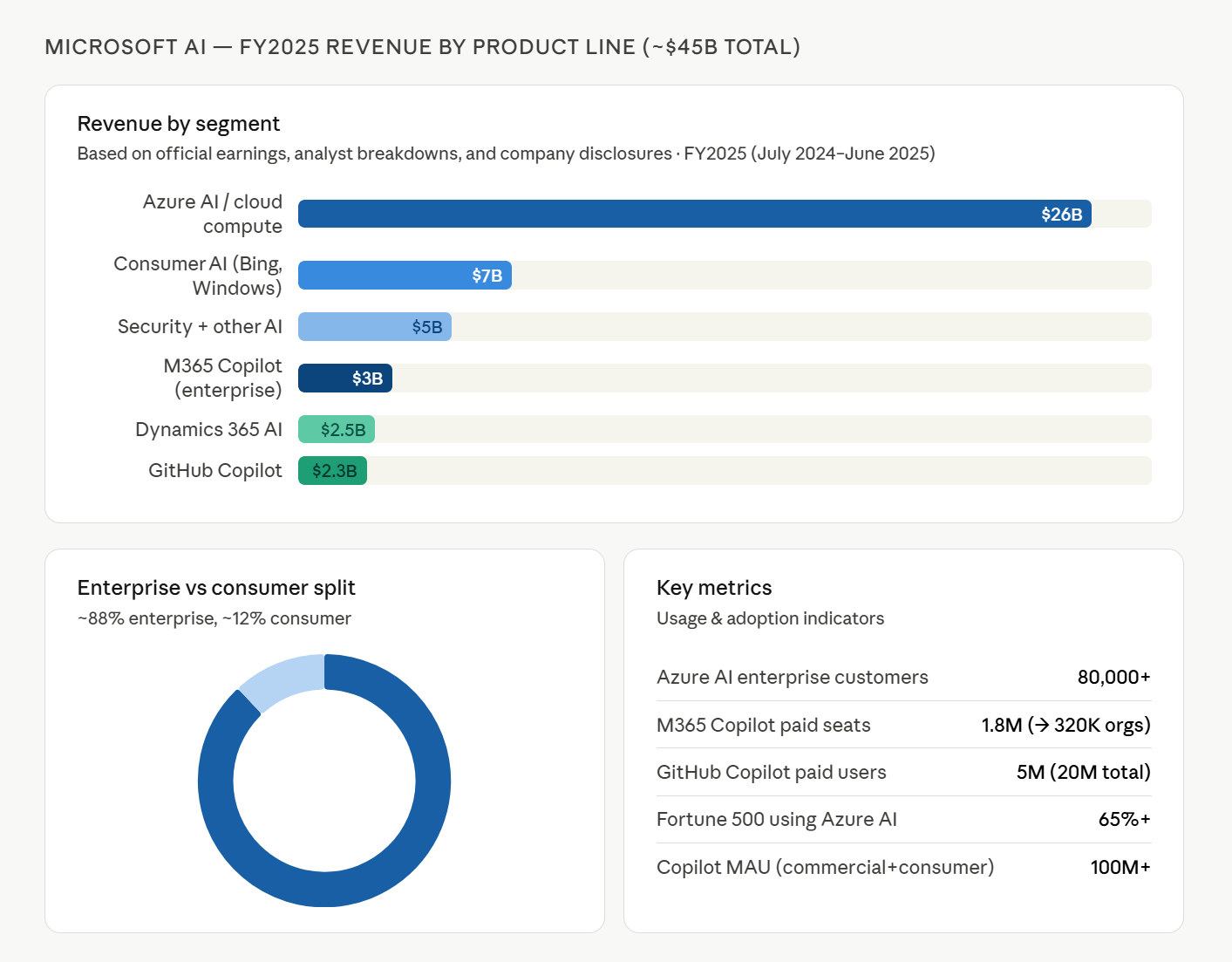

Microsoft, however, is generating significant revenue through its AI offerings, particularly in the enterprise sector. The company boasts an estimated 15 million licensed users for its Copilot service. With an average price of $25 per month, this alone generates an estimated $4.5 billion to $5 billion annually. When combined with fees from Azure API services and the broader growth of its AI-driven cloud services, Microsoft’s AI revenue is projected to exceed $25 billion, with its AI segment experiencing a robust 39% year-over-year growth.
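The Copilot subscription estimate above is simple arithmetic, and a minimal sketch makes the calculation explicit. The inputs are the article's own estimates (15 million licensed users, an average of $25 per user per month), not reported financials, and the function name `annual_subscription_revenue` is purely illustrative.

```python
def annual_subscription_revenue(licensed_users: int, price_per_month: float) -> float:
    """Annualize a per-user monthly subscription price across all licensed users."""
    return licensed_users * price_per_month * 12


# The article's estimates (assumptions, not audited figures).
copilot_users = 15_000_000   # estimated licensed Copilot users
average_price = 25           # assumed average USD per user per month

revenue = annual_subscription_revenue(copilot_users, average_price)
print(f"Estimated annual Copilot subscription revenue: ${revenue / 1e9:.1f} billion")
```

Under these inputs the result is the low end of the quoted range, $4.5 billion. Reaching the $5 billion upper end with the same user count would require an average price of roughly $28 per month, or a somewhat larger licensed base at $25.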

Microsoft’s own projections anticipate over $100 billion in new AI-related revenue within the next three years, a figure some analysts believe could be achieved even faster. This aggressive growth trajectory is underpinned by strategic decisions and product evolution.

The Evolution of Microsoft Copilot: From Components to a Unified Platform

Microsoft’s approach to AI in the enterprise has undergone a significant transformation. Initially, the focus was on licensing OpenAI’s GPT models for Bing, a move that marked a strategic pivot from its internal AI development ambitions. This evolved into the broader Microsoft Copilot vision, which, in its early stages, resembled an intelligent version of the long-familiar "Clippy" assistant.

From Early Concepts to Integrated Solutions:

The journey of Copilot began in 2022, with a strong emphasis on the partnership with OpenAI. This quickly expanded to include specialized versions of Copilot integrated within Microsoft’s core productivity suite (M365), Dynamics, Excel, GitHub, and other applications. Microsoft’s product teams ambitiously began developing numerous "surfaces" atop OpenAI’s models, aiming to enhance user productivity across its vast software ecosystem.

This phase saw the introduction of Copilot Studio, Agent 365, Work IQ, and a plethora of other Copilot-powered features. Concurrently, Microsoft invested heavily in its M365 Graph Connectors to integrate corporate data into Copilot, along with fine-tuning capabilities to optimize data for intelligence. This period was characterized by rapid product development and market introduction, which, while impressive in scope, occasionally led to a perception of disjointedness.

Strategic Realignment for Cohesion:

Recognizing the potential for user confusion and the need for a more unified strategy, Microsoft’s leadership initiated a significant reorganization. Satya Nadella, CEO of Microsoft, spearheaded the consolidation of Copilot product teams into a singular product organization. This strategic move aims to streamline development and present a more coherent AI experience for both corporate and consumer users.

The leadership structure now features Jacob Andreou (ex-Snap) leading Copilot growth, alongside Ryan Roslansky (leading LinkedIn), Perry Clarke (leading Copilot Core), and Charles Lamanna (leading Agents and Apps). This unified leadership team is tasked with aligning various AI initiatives, focusing on overall agent enablement and delivering tangible corporate user value beyond mere application-specific functionalities. This organizational shift allows Microsoft to operate with a cohesive strategy, similar to how Nvidia integrates its engineering layers around a singular vision.

This consolidation effectively:

- Streamlines Product Development: A single team ensures a consistent user experience and accelerated innovation across all Copilot offerings.

- Enhances Integration: Facilitates deeper integration of AI capabilities across Microsoft’s entire product suite and with third-party applications.

- Optimizes Resource Allocation: Directs engineering and marketing efforts towards a unified strategic goal, maximizing impact and efficiency.

- Strengthens Ecosystem Play: Positions Microsoft as the central hub for AI integration, attracting developers and partners to build within its framework.

Why Microsoft Is Gaining Ground

Microsoft’s ascendancy in the enterprise AI market is driven by several key factors:

1. The Integrated Enterprise Solution:

The corporate market is actively seeking integrated toolsets that encompass desktop applications, development tools, robust IT management for AI agents, and seamless connectivity to legacy systems. While specialized players like ServiceNow and Okta operate within this ecosystem, Microsoft’s ability to build a comprehensive offering, supported by a vast partner network, is a significant advantage. The ongoing development of Work IQ, Agent 365, and Copilot Studio signals a clear strategic direction toward fulfilling this demand.

2. A Developer-Centric Ecosystem:

The application development world is immense and is eagerly awaiting more integrated toolsets. Consequently, ERP, financial, productivity, analytics, and other software vendors are increasingly looking for APIs to integrate their offerings into the "Copilot-land" ecosystem. While navigating the various integration points (Teams, Graph, Work IQ, Fabric) can present challenges, the pathways for development are becoming clearer, fostering a burgeoning network of Copilot-compatible applications.

3. A Unified End-User Experience:

The end-users of PCs, along with IT support desks, can now envision a future where diverse AI applications converge seamlessly within the familiar Microsoft desktop environment. The current Copilot experience, while still evolving, is demonstrably improving. Microsoft’s commitment to investing top UI design talent in this area suggests that the interface, currently described as somewhat "Frankensteinish," will become considerably more polished and user-friendly.

4. Amplified Partner Network:

As Microsoft opens up its platform with APIs like those for Work IQ, its extensive partner network is poised for accelerated growth. Corporate cloud vendors, who are increasingly concerned about being superseded by AI agents, are actively seeking opportunities to integrate their services and products into the Copilot framework. This symbiotic relationship strengthens Microsoft’s market position and expands the utility of its AI offerings.

Microsoft’s Added Value Proposition

Microsoft’s platform offers several crucial value-adds that differentiate it in the competitive AI landscape:

- Deep Research Capabilities: Features like the "Researcher" button, leveraging the Microsoft Graph, enable in-depth analysis of user data (calendars, documents, etc.) to provide advice and context. As this capability is enhanced with memory and context, it promises to deliver significant value to individuals and leaders.

- Intelligent Routing and Optimization: New Microsoft Agents allow users to compare queries across different AI models, optimizing for cost and performance before execution. Over time, these agents will be capable of decomposing complex AI tasks and distributing them to the most appropriate models or agents.

- Agentic Interaction with Core Applications: The new Copilot empowers users to interact with complex documents, spreadsheets, and presentations in a dynamic, agent-driven manner. Users can ask questions, modify data, run reports, and create visualizations directly within Copilot, with changes reflected in the underlying Microsoft applications. This extends the "in-app" Copilot experience across the entire Microsoft suite and is expected to become increasingly sophisticated.

- Intelligent Context Layer in Work IQ: The upcoming release of Work IQ APIs will enable companies to import and build custom "context" into Copilot. This transforms Copilot into a powerful engine for agentic HR, finance, sales, and other specialized business functions, deeply integrating AI with core enterprise operations.
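The routing idea in the list above, comparing a query across models for cost and performance before running it, can be illustrated with a small sketch. This is not Microsoft's implementation: the model names, prices, quality scores, and the `pick_model` helper are all hypothetical, and the selection rule (the cheapest model that clears a quality bar for the task) is just one plausible policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelOption:
    """A candidate model with made-up cost and per-task quality numbers."""
    name: str
    usd_per_million_tokens: int   # hypothetical price
    quality: dict                 # task type -> score in [0, 1], hypothetical


def pick_model(options: list, task: str, min_quality: float) -> Optional[ModelOption]:
    """Return the cheapest option whose quality for `task` meets the bar, or None."""
    eligible = [m for m in options if m.quality.get(task, 0.0) >= min_quality]
    if not eligible:
        return None
    return min(eligible, key=lambda m: m.usd_per_million_tokens)


# Illustrative catalog; none of these values describe real products.
CATALOG = [
    ModelOption("model-a", 15, {"coding": 0.90, "summarization": 0.80}),
    ModelOption("model-b", 3, {"coding": 0.60, "summarization": 0.85}),
    ModelOption("model-c", 1, {"coding": 0.40, "summarization": 0.70}),
]

for task in ("coding", "summarization", "robotics"):
    choice = pick_model(CATALOG, task, min_quality=0.8)
    print(task, "->", choice.name if choice else "no suitable model")
```

In this toy catalog the coding request goes to the most expensive model because it is the only one that clears the bar, while summarization is routed to a cheaper one. A task with no qualifying model returns `None`, which a real router would need to handle by escalating or relaxing the threshold.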

The integration of solutions like Galileo, through the Graph connector and fine-tuning capabilities, allows employees to access specialized AI expertise within their familiar Microsoft environment. This expanded API access enables the development of even more use cases for Galileo, solidifying its position as a leading management and HR advisory tool for all employees.

As the AI industry continues its rapid evolution, the strategic positioning and comprehensive ecosystem approach of Microsoft suggest that while OpenAI and Anthropic may capture significant attention and value, Microsoft’s deep integration into the enterprise fabric positions it as a potentially dominant force in the long-term AI landscape. The focus on the "surface" and the "ecosystem," supported by robust underlying models, appears to be the winning formula for widespread enterprise adoption and sustained market leadership.