In an era defined by rapid technological advancement and the pervasive integration of artificial intelligence (AI), the fundamental principles of effective leadership are undergoing a profound re-evaluation. While AI promises unprecedented gains in efficiency and data analysis, it simultaneously highlights the enduring, and indeed increasing, importance of uniquely human leadership qualities. This shift necessitates a critical examination of how leaders can harness AI’s capabilities without relinquishing their core responsibilities in areas of moral judgment, accountability, and strategic vision.

At the heart of this evolving landscape lies the question of delegation. Lolly Daskal, a renowned executive leadership coach and founder of Lead From Within, asserts that certain decisions must unequivocally remain within the human domain. "Anything involving moral judgment, accountability, or long-term identity must stay human," Daskal states. "AI can model outcomes, but it can’t carry responsibility or context across time." This fundamental distinction underscores the inherent limitations of AI in navigating the complex ethical and existential dimensions of leadership. While AI can process vast datasets and identify patterns with remarkable speed, it lacks the lived experience, emotional intelligence, and moral compass necessary to make decisions that carry significant human consequences.

The increasing sophistication of AI, capable of processing more data and identifying patterns that might elude human perception, presents a unique challenge for leaders. Daskal proposes that the answer lies not in attempting to outpace AI’s analytical prowess, but in elevating the quality of human inquiry. "You lead by asking better questions," she advises. "AI can reveal patterns, but it can’t assign meaning or set direction. That’s your role: interpret, decide, and take responsibility." This perspective suggests a symbiotic relationship where AI serves as a powerful analytical tool, augmenting human cognitive abilities rather than supplanting them. Leaders must cultivate the ability to translate AI-generated insights into actionable strategies, imbuing them with purpose and aligning them with organizational values.

Trust, a cornerstone of effective leadership, is also directly affected by the integration of AI. Asked whether a leader can rely on AI and still be trusted, Daskal emphasizes transparency: "Only if they stay transparent about how AI is being used. Trust breaks down when decisions feel outsourced or opaque. Leaders must keep the human layer visible." This highlights a growing concern among employees and stakeholders regarding the potential for AI to create a sense of detachment or even manipulation. Organizations that embrace AI without clear communication about its role and limitations risk eroding the trust essential for a cohesive and productive work environment. Surveys such as the Edelman Trust Barometer have consistently shown that transparency and ethical conduct are paramount for maintaining public trust, a principle that extends directly to the internal dynamics of organizations leveraging AI.

The Risks of Unchecked AI Adoption

The allure of AI-driven efficiency can lead to a dangerous pitfall: speed without reflection. Daskal identifies this as "the biggest leadership risk in adopting AI." She elaborates, "Many leaders rush to implement AI tools without asking what values or trade-offs they’re embedding. That’s not strategy—it’s abdication." This uncritical adoption can inadvertently embed biases, perpetuate inequities, or lead to decisions that are detrimental in the long term, even if they appear optimal in the short term based on data alone. The implications of such haste can be far-reaching, impacting everything from hiring practices to product development and customer relations.

The accelerated pace of AI implementation can also serve as an unforgiving spotlight on pre-existing leadership weaknesses. "It removes the noise," Daskal says of how AI exposes weak leadership. "With AI handling routine work, what’s left is pure judgment, vision, and ethics. If a leader lacks those, the gap shows fast." As AI automates more mundane and repetitive tasks, the true test of leadership will lie in the ability to provide strategic direction, foster innovation, and cultivate a strong ethical framework. This necessitates leaders who possess not only technical literacy but also profound emotional intelligence and a robust moral compass.

AI’s Impact on Business Strategy and Operations

The competitive landscape of business is being fundamentally reshaped by AI. Historically, access to data and advanced automation were key differentiators. Today, however, these are becoming table stakes. "Data and automation used to be differentiators. Now they’re baseline," Daskal states. "The edge comes from how wisely leaders integrate AI with human judgment." This means that organizations can no longer rely solely on technological superiority to gain an advantage. Instead, the focus shifts to the strategic integration of AI with human ingenuity, creativity, and critical thinking. Companies that can effectively blend AI’s analytical power with human intuition and foresight will be best positioned to thrive.

Certain business functions are particularly susceptible to the perils of AI overuse. Daskal warns, "Anything involving people: HR, marketing, decision-making. Over-automation here leads to tone-deaf culture, generic messaging, and poor moral choices." The human element is crucial in these areas. HR requires empathy and nuanced understanding of individual circumstances. Marketing thrives on authentic connection and cultural relevance. Decision-making, especially at strategic levels, demands ethical consideration and an understanding of complex human dynamics. Over-reliance on AI in these domains risks creating sterile, impersonal, and potentially harmful organizational environments.

While AI undeniably enhances execution by streamlining processes and providing data-driven insights, its role in strategy is more nuanced. "AI enhances execution first, but it also surfaces insights that can inform strategy," Daskal notes. "The risk is leaders mistaking correlation for causation and skipping critical thinking." The danger lies in allowing AI-generated correlations to dictate strategic direction without rigorous human analysis and validation. True strategic leadership involves synthesizing AI-driven insights with market understanding, ethical considerations, and long-term vision.

The CEO’s Role in an AI-Driven World

The imperative for leaders to engage directly with AI is growing. Asked whether leaders need firsthand experience with AI, Daskal is unequivocal: "Yes. Leaders who don’t engage firsthand lose perspective. You can’t evaluate tools or challenge outputs if you’re relying on secondhand summaries." This hands-on approach allows leaders to develop a deeper understanding of AI’s capabilities and limitations, enabling them to make more informed decisions about its implementation and to effectively challenge its outputs when necessary. Without this direct engagement, leaders risk becoming disconnected from the very tools shaping their organizations.

Accountability for AI-driven decisions extends to the highest levels of governance. Daskal advises that boards should hold leaders accountable by "asking who made the final call, what risks were considered, and what human oversight was involved. Delegating to AI doesn’t remove human accountability." This underscores the principle that ultimate responsibility for any decision, regardless of the tools used, rests with human leaders. Boards and regulatory bodies are increasingly scrutinizing the ethical implications and accountability frameworks surrounding AI deployment, making it imperative for leaders to establish clear lines of responsibility.

Evolving Team Dynamics and Leadership Demands

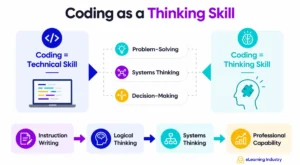

The integration of AI into the workplace fundamentally alters the expectations teams have of their leaders. "Teams need more interpretation, not just instruction," Daskal observes. "They want leaders who can translate what AI says into what matters, and protect what shouldn’t be automated." This shift requires leaders to act as translators and guardians, ensuring that AI serves human goals and values. They must be adept at communicating the rationale behind AI-driven changes, fostering understanding, and safeguarding the aspects of work that contribute to human connection and fulfillment.

A significant risk emerges when teams blindly follow AI without critical evaluation. "They lose critical thinking," Daskal warns. "Over time, the team gets faster but less thoughtful. Leaders must model how to pause, challenge, and reflect." This over-reliance can lead to a decline in problem-solving skills and a diminished capacity for independent thought. Leaders have a responsibility to cultivate an environment where critical inquiry is encouraged, and AI is viewed as a tool to augment, not replace, human judgment.

As AI takes on more tasks, the nature of collaboration within teams must evolve. Daskal describes how: "By shifting the focus from task to meaning. AI can do the work, but humans need to connect, debate, and align on why the work matters." This emphasizes the enduring human need for connection, shared purpose, and collaborative dialogue. Leaders must create opportunities for teams to engage in meaningful discussions, fostering a sense of shared purpose that transcends the transactional nature of AI-assisted tasks.

The ethical considerations of using AI for team performance monitoring are also paramount. Daskal states, "Only if it’s transparent and used for growth, not punishment. Surveillance breaks trust. Insight builds it—if it’s shared and co-owned." The use of AI for performance tracking can easily devolve into intrusive surveillance, undermining morale and trust. Ethical implementation requires transparency, a focus on development and support, and shared ownership of the insights generated.

Leading teams that exhibit resistance to AI tools requires a nuanced approach. Daskal advises against simply promoting the technology: "Don’t sell the tool. Clarify the value. Show how AI supports their thinking, not replaces it. Resistance often comes from fear of being made irrelevant." Addressing the underlying fears and demonstrating how AI can empower rather than displace individuals is key to overcoming resistance and fostering adoption.

Staying Informed and Cultivating Discernment

In the rapidly evolving field of AI, leaders must remain informed without succumbing to information overload. Daskal’s advice is practical: "By choosing a few trusted sources and setting regular time to review. The goal isn’t to know everything. It’s to stay literate enough to ask the right questions." This approach emphasizes the importance of curated learning and the ability to ask insightful questions rather than possessing encyclopedic knowledge.

Whether AI can fully understand human context remains a fundamental question, and Daskal’s answer is direct. "No," she asserts. "It can analyze patterns in language and behavior, but it lacks lived experience, emotion, and moral perspective. That gap is where human leadership remains essential." This inherent limitation of AI underscores why human leaders are indispensable in navigating the complexities of organizational life.

The risk of over-reliance on AI-generated insights is the potential for mistaking correlation for truth. "AI can surface possibilities, but leaders must test for relevance, integrity, and long-term impact," Daskal cautions. This highlights the need for leaders to maintain a healthy skepticism and apply critical thinking to AI-generated information, ensuring it aligns with broader organizational objectives and ethical principles.

The Enduring Value of Human Leadership

Ultimately, the rise of AI serves to clarify, rather than diminish, the definition of true leadership. Daskal posits, "It’s clarified it. Leadership isn’t about being the smartest in the room anymore. It’s about being the clearest, most responsible, and most human." In an era of technological acceleration, teams will increasingly seek out leaders who can provide clarity, ethical guidance, and genuine human connection.

Traditional leadership models must adapt if they are to remain relevant. "Only if they evolve," Daskal states, regarding the usefulness of traditional models. "Hierarchies built for control don’t work in an environment that rewards adaptability, transparency, and speed." Future leaders will be measured by their capacity to navigate complexity, uphold ethical standards, and guide their teams through uncertainty, often in collaboration with AI.

The most overlooked leadership trait in the current landscape, according to Daskal, is discernment. "Not just knowing what AI can do, but knowing what it shouldn’t do—and having the courage to draw that line." This ability to exercise sound judgment and make principled decisions about the application of technology is crucial for responsible leadership in the AI era.

In conclusion, as artificial intelligence continues its transformative journey, the role of human leaders becomes not obsolete, but more critical than ever. By embracing AI as a powerful tool while steadfastly upholding uniquely human qualities such as moral judgment, empathy, strategic vision, and ethical discernment, leaders can navigate this new era with confidence, guiding their organizations toward a future that is both technologically advanced and profoundly human.