The notion that Responsible Artificial Intelligence (AI) concludes with a model’s launch is a fundamental misunderstanding of its very essence; rather, it is the ongoing commitment to its responsible application that truly defines its success. This isn’t a minor detail or an afterthought; it is the core principle. AI models, initially performing equitably, can and often do drift over time. The real-world data distributions they encounter in production can diverge significantly from the controlled conditions of their training. New use cases frequently surface edge cases that the original evaluation might not have anticipated. Without active, vigilant monitoring, these critical shifts can go unnoticed, leading to negative consequences only after a problem has already materialized. This evolving reality is increasingly being reflected in the regulatory landscape, signaling a growing demand for proactive and continuous AI governance.

The U.S. Equal Employment Opportunity Commission’s (EEOC) AI and Algorithmic Fairness Initiative, for instance, serves as a clear warning to employers that AI-powered hiring tools can indeed trigger civil rights liability if they result in disparate outcomes. This initiative underscores a critical shift in how algorithmic decision-making in employment is viewed, moving beyond mere technical performance to encompass its societal and legal ramifications. Complementing this federal guidance, New York City’s Automated Employment Decision Tools law mandates that any employer utilizing AI-assisted hiring must conduct an independent bias audit before deploying such tools and must repeat this audit annually. These legislative and regulatory actions are not the primary drivers for companies prioritizing robust AI governance frameworks, but rather serve as powerful confirmations that the broader field is indeed aligning with the foundational principles of responsible AI practices that have long been advocated by leading organizations. For companies like Eightfold, whose Responsible AI framework includes governance as its fourth pillar, these developments validate the necessity of establishing an ongoing infrastructure designed to ensure that commitments to fairness do not erode once a model is put into operation.

The Critical Gap: From Model Evaluation to Real-World Outcomes

AI model evaluation frameworks, which typically focus on metrics designed to assess performance across various demographic subgroups, answer a crucial question: does the AI system, in this case, the Talent Intelligence Platform, perform consistently across different groups at the point of initial assessment? While this is an indispensable part of responsible AI development, it is not the entirety of the challenge. A model can exhibit statistically equivalent performance across demographic groups and still lead to unequal real-world outcomes if the underlying training data is itself imbued with historical biases.

For example, if a training dataset reflects historical employment patterns where women have been systematically underrepresented in roles deemed "successful hires" due to past societal or systemic barriers, an AI model trained on this data will inevitably learn and replicate these patterns. Even if the model itself demonstrates no differential performance by gender in its internal evaluations, its recommendations will likely perpetuate those historical inequities. This highlights the necessity of moving beyond internal model performance metrics to assess the practical impact of AI deployment.

This is where adverse impact analysis becomes critically important. This analytical approach directly addresses the complementary question: does the use of this AI tool result in disparate outcomes across groups in practice? This is a concept that employment law has applied to human hiring decisions for decades, and it provides a robust framework for evaluating AI-assisted hiring tools. By applying these established legal principles to AI, organizations can proactively identify and mitigate potential discriminatory effects.

Adverse Impact Analysis: Bridging AI and Employment Law

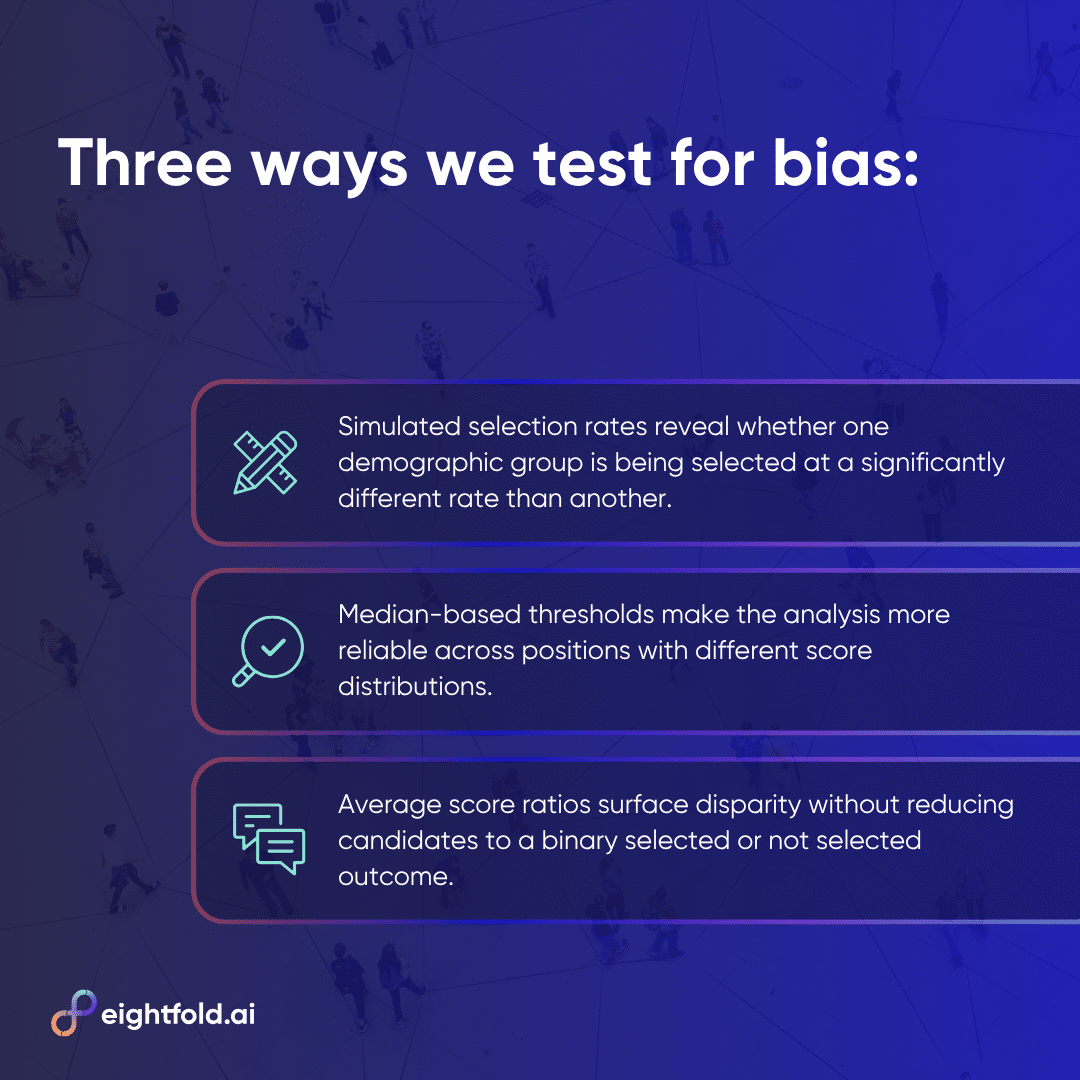

Adverse impact analysis scrutinizes the selection rates across different demographic subgroups. Its fundamental question is whether the observed differences in selection rates are substantial enough to suggest discrimination, whether intentional or unintentional. To provide a comprehensive understanding, Eightfold employs three complementary approaches, each tailored to specific data conditions and analytical needs.

These approaches are designed to surface different facets of potential disparity. Each method, while valuable, possesses inherent limitations that make it insufficient when used in isolation. A multi-faceted approach is therefore essential for a thorough assessment.

The Nuances of Statistical Testing: Why No Single Metric Suffices

The history of adverse impact analysis within employment law has yielded a rich body of research on the strengths and weaknesses of various statistical tests. This scholarly work holds direct relevance for AI systems operating at scale, particularly in the high-stakes domain of talent acquisition.

-

The Z-test: This test is primarily used to determine whether differences in selection rates between subgroups are statistically significant. It performs well with moderate sample sizes. However, when faced with extremely large sample sizes, such as those routinely encountered by platforms like the Talent Intelligence Platform processing millions of applications, the Z-test can become an unreliable indicator of meaningful bias. A seemingly minor difference of just 1% in selection rates can achieve high statistical significance at this scale, even if that difference has no practical implication for the candidates involved.

-

The 4/5ths Rule: To address the limitations of statistical significance at large scales, the 4/5ths Rule focuses on practical significance, independent of sample size. A selection rate ratio below 0.8 or above 1.25 is generally considered indicative of potentially significant adverse impact, irrespective of statistical significance. This scale-independence makes it particularly valuable for analyzing large datasets. Conversely, at very small sample sizes, a single additional selection can dramatically alter the outcome, rendering the rule unreliable without supplementary safeguards. The "flip-flop" test, which assesses whether the result changes if one selection is moved from the advantaged to the disadvantaged group, is one such safeguard.

-

Fisher’s Exact Test: This test is the preferred method when sample sizes are small and the statistical assumptions underlying the Z-test are not met. It precisely calculates the probability of observing a particular selection pattern under the null hypothesis of no discrimination, without relying on approximations. The primary limitation of Fisher’s Exact Test is computational complexity. For very large sample sizes, the factorial calculations involved can become prohibitively resource-intensive.

Eightfold’s adverse impact analysis framework strategically applies all three of these approaches, leveraging each where its reliability is highest. This means statistical significance tests are employed for moderate sample sizes, the 4/5ths Rule is utilized for large-scale data analysis, and Fisher’s Exact Test is reserved for small sample scenarios. The overarching objective is to construct a comprehensive picture of potential disparities that no single statistical test could provide on its own.

Perturbation Testing: Ensuring Fairness at the Individual Level

While adverse impact analysis offers a valuable population-level perspective by examining the equitable distribution of outcomes across all candidates scored for a given position, perturbation testing provides a crucial individual-level view. This method investigates whether a candidate’s score changes significantly if details implying a different demographic group are substituted on their resume.

In this process, pairs of resumes are created: an original and a modified version. The modification involves altering signals, such as names, which can imply gender or ethnicity, to suggest a candidate from a different demographic group. The match scores generated by the AI for both the original and modified resumes are then compared using an independent samples t-test.

The expectation for a fair AI system is that the match scores for the original and modified resumes should be statistically indistinguishable. The candidate’s qualifications, skills, experience, and overall fit for the role remain unchanged. If the scores diverge significantly, it indicates that the model is erroneously treating demographic signals as relevant features, which is a clear violation of fair AI principles.

A low t-score and a high p-value resulting from perturbation tests signify that the match scores are not statistically sensitive to the demographic signals embedded within names. This represents one of the most direct methods for detecting bias that may have infiltrated model scoring at the individual level. It directly aligns with the fundamental standard that every candidate deserves to be evaluated based on their capabilities, not their perceived identity.

External Audits: Accountability Beyond Internal Scrutiny

While internal testing, no matter how rigorous, is an essential component of responsible AI development, it inherently possesses limitations as an accountability mechanism. The very team responsible for building an AI system is not always in the optimal position to objectively assess its fairness. Internal incentives, shared assumptions, and deep familiarity with the system’s intricacies can inadvertently create blind spots.

External bias audits are designed to counteract these limitations by introducing an independent perspective into the evaluation process. Third-party auditors meticulously examine AI platforms, such as the Talent Intelligence Platform, against established, objective fairness standards. They then provide their findings to stakeholders and customers, creating a public record of accountability. For customers operating in jurisdictions like New York City, which mandate regular bias audits, this also ensures compliance with legal requirements.

Beyond mere compliance, external audits serve a vital trust function. Candidates and customers interacting with AI-assisted hiring systems cannot independently verify their fairness. An independent audit, conducted by credentialed experts following a clearly defined methodology, offers a level of objective assurance that internal claims of fairness, while potentially sincere, cannot fully substitute for. This is a key reason why the Talent Intelligence Platform holds certifications such as FedRAMP Moderate and ISO 42001, standards that general-purpose AI tools often cannot meet.

Continuous Monitoring: Sustaining Fairness Commitments Post-Launch

The final, and arguably most critical, component of a comprehensive governance framework is continuous monitoring. This involves establishing the necessary infrastructure to ensure that the fairness commitments made at the time of launch are actively maintained over the system’s lifecycle.

Key performance indicators, such as latency and accuracy metrics, are tracked on live dashboards and regularly reviewed by the engineering team. Automated alarms are configured to trigger when these metrics cross predetermined thresholds, prompting immediate investigation and corrective action. This approach treats model drift, including fairness drift, as an operational concern that requires ongoing attention, rather than a periodic review item.

Furthermore, organizations are maintaining continuously growing "golden datasets." These curated datasets, often refined through a human-in-the-loop process, serve as benchmarks. AI models in production are periodically evaluated against these golden datasets to detect performance changes that might not be apparent in aggregate metrics.

A specific standard for monitoring involves tracking the probability distributions of match scores across various positions. These distributions should maintain a stable trend over time. Any significant deviation or drift in these distributions serves as an early warning sign that the model’s behavior has changed in ways that could impact fairness.

The synergy of automated monitoring, regular human oversight, and structured golden dataset evaluations creates multiple overlapping detection mechanisms. This layered approach ensures that potential issues are identified early, before they have the opportunity to compound and lead to significant adverse real-world impacts.

Fairness as the Bedrock of AI Implementation

The four pillars discussed in this series – the right products, the right data, the right algorithms, and the right governance – collectively represent a singular, unwavering commitment: that every candidate deserves an evaluation of equal quality, measured against the same standard, and held to the same bar. This principle applies not only to candidates who apply early in the recruitment process or those belonging to the largest demographic groups, but to every single candidate.

AI fairness is not a static achievement; it is a dynamic process that requires continuous effort and adaptation. The regulatory landscape is in constant flux, research in AI fairness is rapidly evolving, and the real-world data distributions that AI models encounter are perpetually changing. An approach to responsible AI that fails to evolve alongside these external factors will inevitably lead to systems that become progressively less fair over time, even in the absence of any intentional decisions to alter their behavior.

For HR leaders and talent acquisition professionals tasked with evaluating AI tools, this comprehensive framework offers a vital set of questions to consider. Beyond the initial inquiry of "what measures were taken before launch?", critical follow-up questions include: "What mechanisms are in place after launch to ensure ongoing fairness?" "Does the model demonstrate equal accuracy, or are outcomes equitable in practice?" "Has the system undergone audits, and if so, how frequently, by whom, and using what methodology?"

The answers to these probing questions distinguish AI systems built with genuine accountability from those that merely treat fairness as a superficial checkbox. It is about embedding fairness not as an add-on feature, but as the fundamental foundation upon which AI in talent acquisition is built.

For those seeking to deepen their understanding of responsible AI implementation and the practical steps involved in building and maintaining fair AI systems, further resources are available. Learning more about Eightfold’s commitment to responsible AI, including the details of their bias audit results, can provide valuable insights. This commitment to transparency and continuous improvement is essential in navigating the complex landscape of AI in the modern workplace, ensuring that technology serves to enhance equity rather than perpetuate inequality.