Responsible AI is not a destination; it is an ongoing journey. The principle that responsible AI does not end at launch is not a caveat but the central point of ethical AI practice, especially in the critical domain of employment. A model that performs equitably at launch can, and often does, drift over time, because the real world it operates in keeps changing. Data distributions in production frequently diverge from the controlled conditions of training. New use cases emerge, revealing edge cases that the original evaluation did not anticipate. Without proactive monitoring, these deviations remain invisible until a problem has already surfaced, potentially with significant negative consequences.

This reality is increasingly recognized and codified in regulation. The U.S. Equal Employment Opportunity Commission (EEOC) has launched its AI and Algorithmic Fairness Initiative, explicitly putting employers on notice that AI-powered hiring tools can expose them to civil rights liability if those tools produce disparate outcomes across protected groups. Similarly, New York City’s pioneering Automated Employment Decision Tools law mandates that any employer using AI-assisted hiring must conduct an independent bias audit prior to deployment and repeat this audit annually. These regulatory actions are not the genesis of responsible AI development; rather, they confirm that the broader industry is catching up to practices that responsible AI has always required. For Eightfold, which has long championed a comprehensive approach to AI ethics, these developments underscore the importance of its governance framework, the fourth pillar of its responsible AI strategy. This pillar focuses on the ongoing infrastructure designed to ensure that commitments to fairness do not degrade after a model has been deployed.

The Gap Between Model Evaluation and Real-World Outcomes

The initial evaluation of an AI model, often through sophisticated metrics, addresses a crucial question: does the AI system (in this case, the Eightfold Talent Intelligence Platform) perform consistently across demographic subgroups? While this is an essential benchmark, it is not the sole determinant of fairness. A model can demonstrate equal performance across groups in its initial testing and still contribute to unequal real-world outcomes if the data it learned from is itself biased.

Consider a scenario where historical hiring data reveals a systemic underrepresentation of women among "successful hires" due to entrenched societal barriers. A model trained on such data will inevitably learn and perpetuate these historical patterns, generating recommendations that reflect past inequities, even if the model itself shows no statistically significant performance difference by gender during its evaluation. This highlights a critical distinction: while model evaluation assesses internal consistency, it doesn’t always capture the broader societal impact.

Adverse impact analysis is a vital complement to these initial evaluations. It directly addresses the question: does the use of this AI tool result in disparate outcomes across different groups in practice? Employment law has applied this framework to human hiring decisions for decades, and it is the same principle that Eightfold applies to its AI-assisted hiring tools. It shifts the focus from the model’s internal workings to its external consequences.

Adverse Impact Analysis: Bridging AI and Employment Law

Adverse impact analysis meticulously examines selection rates across demographic subgroups. Its core function is to determine whether any observed differences in these rates are substantial enough to suggest discrimination, whether intentional or unintentional. This analysis is critical for understanding the real-world implications of AI in hiring.

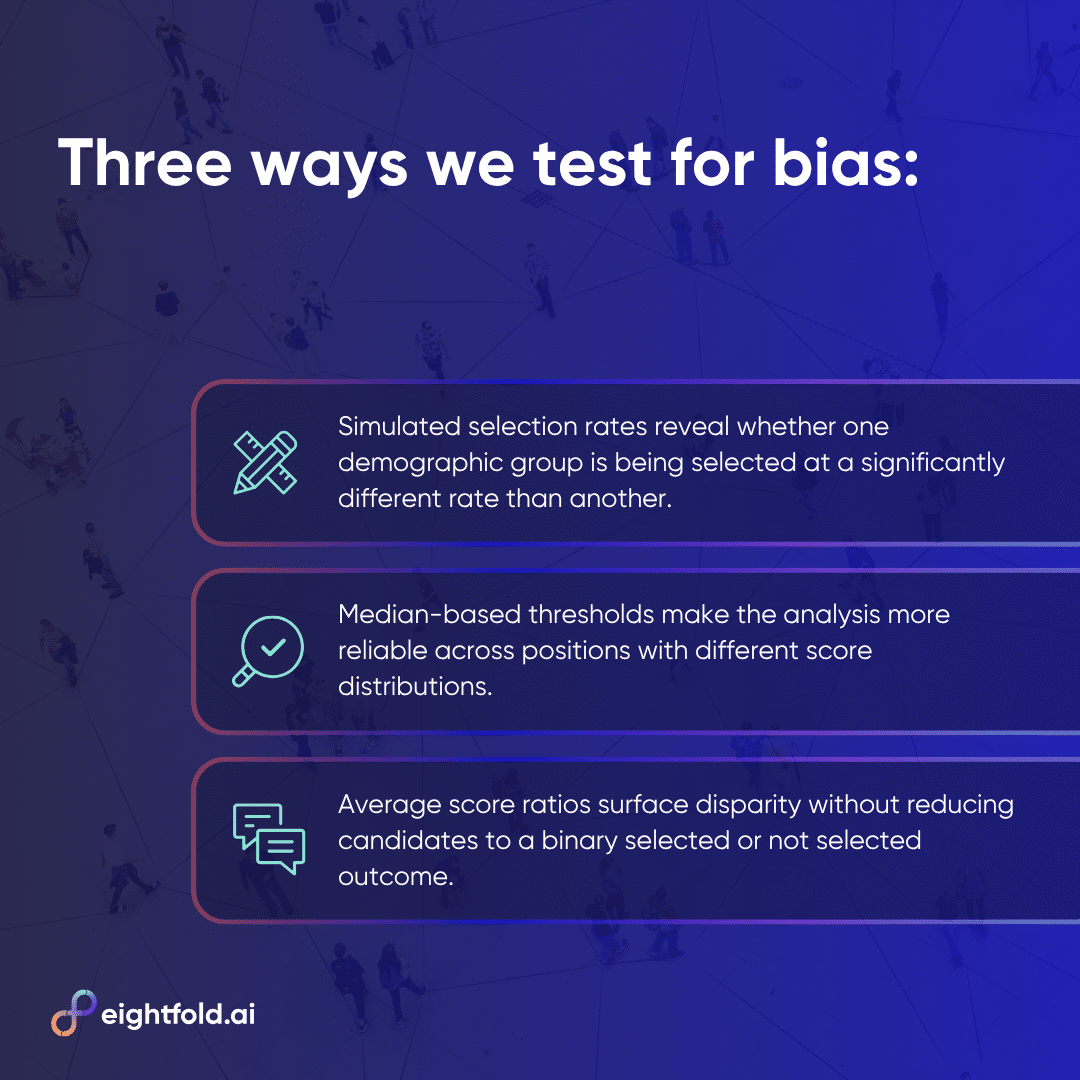

Eightfold employs a trio of complementary analytical approaches, each tailored to specific data conditions and offering unique insights into potential disparities:

- Statistical Significance Tests (e.g., Z-test): These tests assess whether differences in selection rates between subgroups are statistically significant. They are particularly effective for datasets of moderate size. However, in scenarios involving exceptionally large sample sizes, such as those routinely encountered by the Talent Intelligence Platform, a statistically significant difference might be minuscule in practical terms. A mere 1% variation in selection rates, when applied to millions of applications, can be deemed statistically significant, yet hold no meaningful practical consequence for individual candidates.

- The 4/5ths Rule (or 80% Rule): This rule addresses the limitation of statistical significance by measuring practical significance independently of sample size. A selection rate ratio below 0.8 or above 1.25 is generally considered indicative of potentially significant adverse impact, irrespective of statistical significance. This scale independence makes it invaluable for large-scale data analysis. Conversely, at very small sample sizes, even a single additional selection can drastically alter the ratio, making the rule unreliable without supplementary safeguards such as the "flip-flop" test. This test checks whether the result changes when a single selection is moved from an advantaged to a disadvantaged group.

- Fisher’s Exact Test: This statistical test is the preferred tool when dealing with small sample sizes where the statistical assumptions of the Z-test may not hold. It calculates the precise probability of observing a given selection pattern under the null hypothesis of no discrimination, without relying on approximations. Its primary limitation is computational; for very large datasets, the factorial calculations involved can become prohibitively resource-intensive.
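To make the contrast between the first two approaches concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Eightfold's implementation: the function names, the pooled two-proportion form of the Z-test, and all the counts are hypothetical.

```python
import math

def two_proportion_z(sel_a, n_a, sel_b, n_b):
    """Pooled two-proportion z-test: returns (z, two-sided p-value)."""
    p_a, p_b = sel_a / n_a, sel_b / n_b
    pooled = (sel_a + sel_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    return z, math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail

def impact_ratio(sel_a, n_a, sel_b, n_b):
    """Selection rate of group A divided by the selection rate of group B."""
    rate_b = sel_b / n_b
    return math.inf if rate_b == 0 else (sel_a / n_a) / rate_b

def passes_four_fifths(ratio):
    """True when the ratio falls inside the 0.8 to 1.25 band."""
    return 0.8 <= ratio <= 1.25

def flip_flop_stable(sel_a, n_a, sel_b, n_b):
    """True if the 4/5ths verdict survives moving one selection
    from the higher-rate group to the lower-rate group."""
    before = passes_four_fifths(impact_ratio(sel_a, n_a, sel_b, n_b))
    if sel_a / n_a >= sel_b / n_b:
        after = impact_ratio(sel_a - 1, n_a, sel_b + 1, n_b)
    else:
        after = impact_ratio(sel_a + 1, n_a, sel_b - 1, n_b)
    return before == passes_four_fifths(after)

# Hypothetical large sample: 9.9% vs 10.0% selection over a million applicants each.
z, p = two_proportion_z(100_000, 1_000_000, 99_000, 1_000_000)
ratio = impact_ratio(99_000, 1_000_000, 100_000, 1_000_000)
print(f"large sample: p={p:.4f}, ratio={ratio:.3f}, passes 4/5ths={passes_four_fifths(ratio)}")

# Hypothetical small sample: 3 of 8 selected vs 10 of 20 selected.
small_ratio = impact_ratio(3, 8, 10, 20)
print(f"small sample: ratio={small_ratio:.3f}, stable under flip-flop={flip_flop_stable(3, 8, 10, 20)}")
```

In the large-sample case the difference is statistically significant yet the ratio sits comfortably inside the band. In the small-sample case the ratio fails the rule, but moving a single selection reverses the verdict, which is the instability the flip-flop test is meant to expose.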

Eightfold’s adverse impact analysis framework strategically deploys all three approaches, applying each where it is most reliable. This integrated approach ensures a comprehensive understanding of potential disparities, acknowledging that no single statistical test can provide a complete picture.
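For the small-sample case, Fisher's exact test can be sketched with the Python standard library alone. This is a hedged illustration: the function name and the example counts are hypothetical, and the two-sided convention used here (summing every table no more likely than the observed one) is one common choice rather than a confirmed detail of Eightfold's framework.

```python
from math import comb

def fisher_exact_two_sided(sel_a, n_a, sel_b, n_b):
    """Two-sided Fisher's exact p-value for a 2x2 selection table.

    sel_a / n_a: selected and total candidates in group A
    sel_b / n_b: selected and total candidates in group B
    """
    total_selected = sel_a + sel_b
    denom = comb(n_a + n_b, total_selected)

    def prob(k):
        # Hypergeometric probability that group A receives k of the selections
        return comb(n_a, k) * comb(n_b, total_selected - k) / denom

    lowest = max(0, total_selected - n_b)
    highest = min(n_a, total_selected)
    observed = prob(sel_a)
    # Sum the probabilities of all tables at most as likely as the observed one;
    # the small tolerance guards against floating-point ties.
    return sum(
        p for p in (prob(k) for k in range(lowest, highest + 1))
        if p <= observed * (1 + 1e-9)
    )

# Hypothetical small sample: 3 of 4 selected in one group, 1 of 4 in the other.
print(f"p-value = {fisher_exact_two_sided(3, 4, 1, 4):.4f}")
```

The binomial coefficients grow quickly with the table totals, which is the computational cost that makes the exact test impractical on very large datasets.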

Perturbation Testing: Fairness at the Individual Level

While adverse impact analysis offers a valuable population-level perspective, assessing fairness at the individual level is equally crucial. Perturbation testing provides this granular view. It addresses the question: for a specific candidate, does their score change significantly if details on their resume that imply a different demographic group are substituted?

In perturbation testing, Eightfold systematically creates pairs of resumes. An original resume is modified to change signals like names, which can often imply gender or ethnicity, to suggest a candidate from a different demographic group. The match scores generated by the AI for both the original and the modified resumes are then compared using an independent samples t-test.

The expectation for a fair AI system is that the match scores generated for both versions of the resume should be statistically indistinguishable. The underlying qualifications, skills, experience, and overall fit for the role remain identical. If the scores diverge significantly, it is a strong indicator that the model is inadvertently treating demographic signals as relevant features, which is a direct violation of fairness principles. A t-statistic close to zero and a high p-value on perturbation tests signify that match scores are not statistically sensitive to the demographic signals embedded in names. This represents one of the most direct methods for detecting bias that may have crept into model scoring at the individual level, affirming the fundamental right of every candidate to be evaluated based on their capabilities, not their identity.
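The procedure above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `score_resume` is a hypothetical stand-in for a match score, the resumes and names are invented, the Welch (unequal-variance) form of the independent-samples t-test is used, and the p-value relies on a normal approximation that is only reasonable for large samples. In practice a library routine such as `scipy.stats.ttest_ind` would supply the exact p-value.

```python
import math
import statistics

REQUIRED_SKILLS = {"python", "sql", "machine learning"}  # hypothetical role

def score_resume(resume):
    """Hypothetical match score: share of required skills on the resume."""
    return len(REQUIRED_SKILLS & set(resume["skills"])) / len(REQUIRED_SKILLS)

def perturb_name(resume, new_name):
    """Return a copy of the resume with only the name changed."""
    return {**resume, "name": new_name}

def welch_t_test(xs, ys):
    """Welch's independent-samples t statistic with a large-sample p-value."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    se = math.sqrt(statistics.variance(xs) / len(xs)
                   + statistics.variance(ys) / len(ys))
    if se == 0:
        # Constant scores in both samples: identical means give no evidence of a gap
        if mean_x == mean_y:
            return 0.0, 1.0
        return math.copysign(math.inf, mean_x - mean_y), 0.0
    t = (mean_x - mean_y) / se
    return t, math.erfc(abs(t) / math.sqrt(2))  # normal approximation

resumes = [
    {"name": "Emily", "skills": ["python", "sql"]},
    {"name": "Greg", "skills": ["python", "sql", "machine learning"]},
    {"name": "Anne", "skills": ["sql"]},
    {"name": "Todd", "skills": []},
]
originals = [score_resume(r) for r in resumes]
perturbed = [score_resume(perturb_name(r, "Alex")) for r in resumes]

t_stat, p_value = welch_t_test(originals, perturbed)
print(f"t = {t_stat:.3f}, p = {p_value:.3f}")
```

Because the hypothetical scorer never reads the name field, the two score lists are identical and the test finds no difference. A scorer that leaked name information would push the t-statistic away from zero.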

External Audits: Accountability Beyond Internal Testing

While internal testing, however rigorous, is an indispensable part of the AI development lifecycle, it inherently possesses limitations as an accountability mechanism. The same team responsible for building a system may not be in the optimal position to objectively assess its fairness. Internal incentives, shared assumptions, and a deep familiarity with the system can inadvertently create blind spots.

External bias audits are designed to address this by introducing an independent perspective into the evaluation process. Credentialed third-party auditors examine the Eightfold Talent Intelligence Platform against objective fairness standards. They provide their findings to stakeholders and customers, thereby establishing a public record of accountability. For organizations operating in jurisdictions like New York City, which mandate bias audits, this process also ensures compliance with applicable legal requirements.

Beyond mere compliance, external audits serve a vital trust function. Candidates and customers interacting with AI-assisted hiring systems are often unable to evaluate these complex technologies themselves. An independent audit, conducted by recognized experts using a clearly defined methodology, offers a level of objective assurance that internal claims of fairness alone cannot provide. This commitment to external validation is a key reason why the Talent Intelligence Platform holds certifications like FedRAMP Moderate and ISO 42001, standards that general-purpose AI tools typically cannot meet.

Active Monitoring: Sustaining Fairness Commitments Post-Launch

The final, and arguably most critical, component of Eightfold’s governance framework is continuous monitoring. This infrastructure is designed to ensure that the fairness commitments made at the time of launch are not only maintained but actively upheld over time.

Key metrics related to latency and accuracy are tracked on live dashboards, with regular oversight from the engineering team. Automated alarms are configured to trigger when these metrics cross predetermined thresholds, prompting immediate investigation and corrective action. This approach treats model drift, including fairness drift, as an operational concern requiring proactive management, rather than an issue to be addressed only during infrequent annual reviews.
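A threshold alarm of this kind can be sketched very simply. The metric names, limits, and data structure below are hypothetical and chosen only to illustrate the idea; they are not taken from Eightfold's dashboards.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Threshold:
    metric: str            # name of the tracked metric
    limit: float           # value at which the alarm fires
    higher_is_worse: bool  # True for latency-style metrics, False for accuracy-style

def breached(metrics, thresholds):
    """Return the names of metrics that have crossed their thresholds."""
    alerts = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            continue  # metric not reported in this snapshot
        crossed = value > t.limit if t.higher_is_worse else value < t.limit
        if crossed:
            alerts.append(t.metric)
    return alerts

# Hypothetical thresholds and a hypothetical metrics snapshot
thresholds = [
    Threshold("p95_latency_ms", 500.0, higher_is_worse=True),
    Threshold("accuracy", 0.90, higher_is_worse=False),
]
snapshot = {"p95_latency_ms": 620.0, "accuracy": 0.93}
print(breached(snapshot, thresholds))
```

The same pattern extends to fairness metrics, for example by adding a threshold on a selection rate ratio alongside the latency and accuracy limits.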

Furthermore, Eightfold maintains continuously growing "golden datasets." These datasets are meticulously curated through a human-in-the-loop process, ensuring their accuracy and relevance. AI models in production are regularly evaluated against these golden datasets to detect any performance changes that might not be apparent in aggregate metrics.

One signal monitored closely is the stability of match score probability distributions across different positions over time. A deviation in this distribution serves as an early warning sign that the model’s behavior may have changed in ways that could impact fairness. The integration of automated monitoring, regular human review, and structured golden dataset evaluations creates a multi-layered detection system. This comprehensive approach ensures that issues are identified early, before they have the opportunity to compound and result in significant real-world negative impacts.
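One common way to quantify a shift between two score distributions is the Population Stability Index (PSI). The source does not say which statistic Eightfold uses, so PSI is an assumption here, as are the function names, the ten equal-width bins, and the premise that match scores lie between 0 and 1.

```python
import math

def histogram(scores, bins=10):
    """Count scores into equal-width bins over [0, 1] (assumed score range)."""
    counts = [0] * bins
    for s in scores:
        idx = min(max(int(s * bins), 0), bins - 1)  # clamp to a valid bin
        counts[idx] += 1
    return counts

def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI between a baseline and a current sample of match scores."""
    base_counts = histogram(baseline, bins)
    curr_counts = histogram(current, bins)
    psi = 0.0
    for b, c in zip(base_counts, curr_counts):
        p_base = max(b / len(baseline), eps)  # eps avoids log(0) on empty bins
        p_curr = max(c / len(current), eps)
        psi += (p_curr - p_base) * math.log(p_curr / p_base)
    return psi

# Hypothetical scores for one position: launch-time baseline vs a later window
baseline = [i / 100 for i in range(100)]
shifted = [min(s + 0.3, 0.99) for s in baseline]

print(f"PSI, no change: {population_stability_index(baseline, baseline):.3f}")
print(f"PSI, shifted:   {population_stability_index(baseline, shifted):.3f}")
```

Computed per position and per time window, a value near zero indicates a stable distribution, while larger values flag a shift worth investigating. The alert level is a tuning choice for each deployment.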

Fairness as the Foundational Principle

The four pillars discussed in this series – right products, right data, right algorithms, and right governance – represent a singular, unwavering commitment: that every candidate deserves an evaluation of equal quality, adhering to the same high standard, and meeting the same rigorous bar. This commitment extends to all candidates, not just those who apply early in a recruitment cycle or those belonging to the largest demographic groups. Every single candidate is included.

AI fairness is not a static achievement; it is a dynamic process that requires continuous maintenance and adaptation. The regulatory landscape is in constant flux, research in AI ethics is rapidly evolving, and the data distributions that AI models encounter in the real world are perpetually changing. An approach to responsible AI that does not evolve in tandem with these shifts will inevitably lead to systems that become progressively less fair over time, even in the absence of any intentional design changes.

For HR leaders and talent acquisition professionals tasked with evaluating AI tools, this comprehensive framework offers a guide to the critical questions they should be asking. The focus should extend beyond "What did you do before launch?" to "What processes are in place after launch?" The inquiry should move from "Does your model demonstrate equal accuracy?" to "Do the real-world outcomes appear equitable?" And beyond "Have you been audited?" to "How frequently, by whom, and with what specific methodology?"

The answers to these probing questions are what differentiate AI systems built with genuine accountability from those that merely treat fairness as a superficial checkbox. The ultimate goal is not fairness as an add-on feature, but fairness as the unshakeable foundation upon which all AI systems are built and operated.