A comprehensive new report, "2026 AI Adoption in the Enterprise," jointly produced by AI platform Writer and Workplace Intelligence, has unveiled a significant disconnect between how C-suite executives and employees perceive the integration of artificial intelligence in the workplace. The survey, which polled 1,200 C-suite executives and 1,200 employees across the United States, United Kingdom, Ireland, Benelux, France, and Germany, highlights five prevalent "AI adoption myths" that could pose considerable challenges for Human Resources departments striving to implement AI strategies effectively. These findings suggest that while leadership may believe AI is smoothly integrating, the ground reality for many employees points to apprehension, resistance, and a lack of adequate support.

The study, released in early 2026, comes at a critical juncture as organizations globally accelerate their AI adoption efforts. Businesses are increasingly viewing AI not just as a tool for efficiency but as a strategic imperative for competitiveness and innovation. This rapid push, however, appears to be outpacing clear communication and robust support structures for the workforce. The report’s findings suggest that many HR leaders may be operating under assumptions that do not align with the lived experiences of their employees, potentially leading to unintended consequences, decreased morale, and stalled AI initiatives.

Myth 1: "Our Employees Feel Safe Speaking Up About AI Problems."

A cornerstone of responsible AI deployment is the ability of employees to report issues, especially biased or erroneous AI outputs. HR and compliance leaders typically focus on robust governance frameworks and stringent data privacy measures to mitigate AI-related risks. This focus is crucial, but the survey points to a critical oversight: the assumption that employees will comfortably report AI malfunctions without fear of repercussions.

The report reveals a stark contrast between executive perception and employee sentiment. A staggering 90% of executives surveyed believe that their employees feel secure in reporting unethical AI behaviors without any risk of reprisal. However, the reality on the ground is far more precarious. The study found that 28% of employees have already encountered an AI tool producing a result that was dangerously inaccurate, ethically questionable, or demonstrably biased. Despite witnessing these critical failures, a significant 30% of these employees explicitly stated they would not feel safe reporting such incidents due to a prevalent fear of retaliation.
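To put those two figures on a common scale, the short calculation below combines them into a share of the whole workforce. It is only a back-of-the-envelope sketch: it assumes the 30% applies strictly to the 28% who encountered a failure, treats the rounded percentages as exact, and uses the 1,200-employee sample size stated above. The function and variable names are illustrative and do not come from the report.

```python
def share_silenced(pct_encountered: int, pct_unsafe: int) -> float:
    """Percent of ALL employees who both saw an AI failure and would not report it.

    Assumes pct_unsafe is measured only among those who encountered a failure.
    """
    return pct_encountered * pct_unsafe / 100


# Figures quoted in the article (rounded survey percentages).
ENCOUNTERED_PCT = 28       # employees who saw a harmful or biased AI output
UNSAFE_PCT = 30            # of those, share who would not feel safe reporting
EMPLOYEES_SURVEYED = 1200  # employee sample size stated in the article

silenced_pct = share_silenced(ENCOUNTERED_PCT, UNSAFE_PCT)
silenced_count = round(EMPLOYEES_SURVEYED * silenced_pct / 100)

print(f"Share of all employees: {silenced_pct:.1f}%")
print(f"Approximate respondents: {silenced_count} of {EMPLOYEES_SURVEYED}")
```

Under these assumptions, roughly one employee in twelve has seen a serious AI failure and would keep quiet about it, which sits uneasily beside the 90% of executives who believe reporting is safe.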

This disparity underscores a critical gap in trust and communication. While leadership may believe they have established a safe reporting environment, employees’ experiences suggest otherwise. Because AI can perpetuate or even amplify existing societal biases, reporting channels that employees are afraid to use allow systemic issues to go unnoticed, potentially causing reputational damage, legal liabilities, and an erosion of employee trust. The implications for HR are profound: they must foster a culture where speaking out is not only permitted but actively encouraged and protected, moving beyond mere policy statements to demonstrable actions.

Myth 2: "Gen Z Will Figure Out AI. They’re Digital Natives."

A common heuristic in AI adoption planning is the assumption that younger generations, particularly Gen Z, will effortlessly integrate AI into their workflows due to their inherent familiarity with digital technologies. This notion of "digital nativity" often leads to less emphasis on providing tailored training and support for these demographics. However, the Writer and Workplace Intelligence survey data paints a more complex and concerning picture.

The research indicates that Gen Z employees are, in fact, the most likely cohort to actively resist or undermine their company’s AI strategy. This resistance is not merely passive avoidance; 44% of Gen Z workers admitted to sabotaging AI tools in at least one manner, a figure significantly higher than the overall employee average of 29%. The methods of sabotage are varied and concerning, including intentionally generating low-quality outputs to discredit AI’s effectiveness or manipulating performance metrics to present a skewed view of AI’s impact.

The motivations behind this resistance are deeply rooted in career anxieties. Thirty percent of Gen Z respondents cited a fear of AI taking over their jobs as a primary reason for their actions. An additional 26% felt that AI had diminished their perceived value or stifled their creativity. For early-career professionals whose professional identity is often built around skill acquisition and innovative contributions, the perceived threat of AI becoming a job replacement or a devaluer of their unique talents is a potent source of apprehension. HR leaders must recognize that "digital native" status does not automatically translate to AI proficiency or acceptance; instead, it may necessitate a more nuanced approach that addresses anxieties about job security and the preservation of human creativity and judgment.

Myth 3: "Our Managers Are Handling Adoption."

The middle management layer is often envisioned as the crucial bridge between high-level AI strategies and their practical implementation by frontline employees. The expectation is that managers will champion AI initiatives, guide their teams, and translate executive directives into actionable tasks. However, the survey results suggest that this crucial role is, for the most part, not being effectively fulfilled.

Data from the report indicates that a mere 35% of employees view their manager as an active "AI champion." A substantial portion, nearly 60%, perceive their managers as open to AI but offering minimal guidance, leaving employees to navigate the complexities largely on their own. More alarmingly, 55% of employees (rising to 64% among Gen Z) believe they understand how to use AI in their jobs better than their direct managers do.

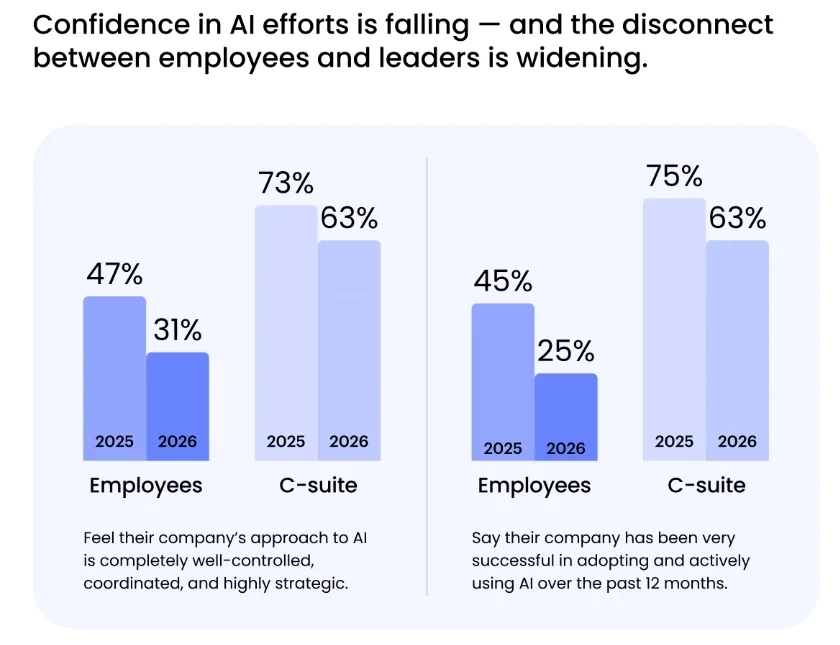

This knowledge and confidence deficit at the managerial level has tangible consequences. Managers serve as the linchpin for transforming strategic intentions into daily operational realities. When they lack the necessary expertise and self-assurance, the chasm between what executives communicate and what employees actually do widens considerably. The report substantiates this by highlighting a decline in employee confidence regarding their company’s AI strategy, which has fallen from 47% in 2025 to just 31% in 2026. Furthermore, the managerial gap contributes to a broader trust issue: 75% of employees stated they would trust AI over their manager for at least one specific task, indicating a significant erosion of managerial authority and perceived competence in the context of AI. HR departments must therefore invest heavily in upskilling managers, equipping them with the knowledge and confidence to effectively lead AI integration.

Myth 4: "We Have an AI Strategy, So Adoption Programs Will Work."

Organizations have invested considerable effort in developing AI strategies, focusing on upskilling the workforce and strategic planning around new technological paradigms. The survey’s findings on this front are particularly disheartening, suggesting that many AI strategies are more about external perception than internal utility.

A striking 75% of C-suite respondents confessed that their company’s AI strategy is "more for show" than for genuine internal guidance. The primary drivers for developing these strategies appear to be public relations and investor relations, rather than a concrete plan for internal transformation. This is further underscored by the admission from 39% of these executives that they lack a formal roadmap for generating revenue from AI tools. Paradoxically, despite this foundational deficiency, nearly 70% of these same companies are already proceeding with layoffs that are directly linked to AI implementation.

This revelation points to a concerning trend where AI is being adopted as a corporate talking point and a cost-reduction mechanism, without a well-defined operational strategy or a clear plan for maximizing its value. The lack of genuine strategic intent means that adoption programs, even if well-intentioned, are likely to falter. The disconnect between the perceived importance of AI for external stakeholders and the absence of internal strategic depth creates an environment where layoffs may occur without a clear understanding of how AI is intended to enhance productivity or create new opportunities. HR leaders face the challenge of advocating for authentic, actionable AI strategies that are grounded in business objectives and supported by comprehensive internal plans, rather than superficial pronouncements.

Myth 5: "Our Performance System Can Handle This."

The advent of AI is creating a bifurcated workforce, with a segment of "AI super-users" experiencing significantly amplified productivity and career advancement, while others lag behind. The report indicates that AI super-users save nearly nine hours per week, compared to just two hours for basic users. Furthermore, these super-users are approximately three times more likely to have received both a promotion and a pay raise in the past year.

This disparity is not going unnoticed by employees. A substantial 43% of employees report being expected to handle the workload of more than one person due to AI-driven productivity gains. Concurrently, 95% of C-suite executives acknowledge that roles and organizational structures are already undergoing significant transformation as a direct result of AI. The research dubs this phenomenon a "two-tiered workforce," where the C-suite is actively cultivating and rewarding these high-performing AI adopters.

The implications for those who cannot or will not adapt are stark. Researchers from the study suggest that individuals who do not embrace AI risk being left behind. This prediction is reinforced by the statistic that 60% of surveyed executives are planning layoffs for employees who "can’t or won’t use AI." This creates an urgent need for performance management systems that can accurately assess AI proficiency, reward effective utilization, and provide pathways for upskilling those at risk of falling into the lower tier. HR departments must proactively re-evaluate performance metrics, career development frameworks, and severance policies to address the emerging realities of an AI-augmented workforce, ensuring that the transition is managed equitably and strategically.

The findings from the "2026 AI Adoption in the Enterprise" report serve as a critical wake-up call for organizations. The prevalent "AI adoption myths" highlight a significant disconnect between executive optimism and the nuanced realities experienced by employees. For HR leaders, navigating this landscape requires a shift from broad assumptions to targeted, empathetic, and data-driven strategies. Addressing employee fears, empowering managers, fostering genuine strategic integration, and adapting performance systems are not merely optional enhancements but essential steps to ensure that AI adoption leads to sustainable growth and a more engaged, productive workforce. The future of work, as shaped by AI, demands a more grounded and human-centric approach to its integration.