The rapid integration of generative artificial intelligence into the corporate landscape has changed how Learning and Development (L&D) professionals access, curate, and deploy organizational knowledge. As the industry pivots from traditional search-engine-based research to direct interaction with Large Language Models (LLMs), the ability to "ask" AI questions has emerged as a critical strategic competency. This shift represents a move away from keyword-based searching toward context-aware prompt engineering, where the quality of the output depends heavily on how well the inquiry is structured.

The Evolution of Knowledge Retrieval in Corporate Training

For decades, the standard workflow for an Instructional Designer involved scouring internal databases and external search engines to synthesize information into learning modules. This process was often labor-intensive, requiring hours of manual filtering. With the advent of sophisticated AI assistants like OpenAI’s ChatGPT, Anthropic’s Claude, and Google’s Gemini, retrieval has become nearly instantaneous, but framing the request now demands more deliberate thought.

Industry data suggests a significant shift in workplace behavior. According to the 2024 Work Trend Index by Microsoft and LinkedIn, 75% of global knowledge workers now use AI at work. However, a "prompting gap" has emerged, where users who lack a structured approach to AI interaction often receive generic, surface-level, or occasionally inaccurate responses. In the context of L&D, where accuracy and pedagogical soundness are paramount, the stakes of suboptimal AI usage are particularly high.

Understanding the Mechanics of AI Responses

To effectively leverage AI, professionals must first understand that these systems do not "know" facts in the human sense. Instead, they function as sophisticated prediction engines that generate the most probable sequence of words based on patterns in their training data. When an L&D professional asks a vague question, the AI is forced to make assumptions to fill the gaps.

A primary challenge identified by technologists is the phenomenon of "hallucination," where the AI provides a confident but factually incorrect answer. This is more likely when the prompt lacks specific constraints or context. Consequently, the transition from simple questioning to "structured prompting" has become the new standard for high-performance L&D teams. By providing the AI with a persona, a clear objective, and a defined format, users can reduce the risk of error and increase the usefulness of the generated content.
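The three ingredients named above (persona, objective, format) can be sketched as a small helper. This is a minimal illustration, not an established tool: the function name `build_structured_prompt` and the label wording are assumptions made for this example.

```python
def build_structured_prompt(persona: str, objective: str, output_format: str) -> str:
    """Combine a persona, an objective, and a format into one prompt string."""
    parts = {"Persona": persona, "Objective": objective, "Format": output_format}
    # Refuse to build a prompt with a blank component, since gaps are what
    # force the model to fill in assumptions of its own.
    missing = [name for name, value in parts.items() if not value.strip()]
    if missing:
        raise ValueError(f"Missing prompt components: {', '.join(missing)}")
    # One labeled line per component, in the order given above.
    return "\n".join(f"{name}: {value.strip()}" for name, value in parts.items())


prompt = build_structured_prompt(
    persona="You are an instructional designer for a remote-first sales team.",
    objective="Draft an onboarding outline for new account executives.",
    output_format="A numbered list of five modules, one sentence each.",
)
print(prompt)
```

Raising an error on an empty component is a design choice for this sketch: it makes the gap visible to the author before the model has a chance to guess.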

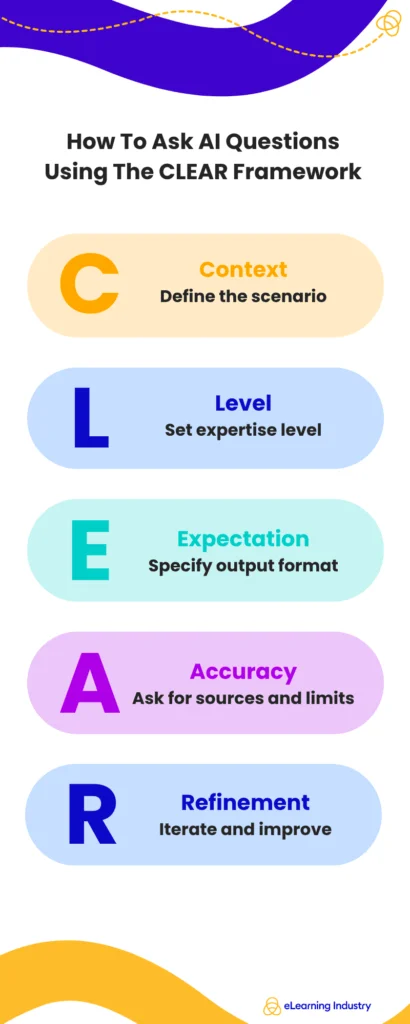

The CLEAR Framework: A Systematic Approach to Prompting

To standardize the quality of AI interactions, industry experts have developed various frameworks, with the CLEAR framework gaining significant traction among instructional designers. This methodology provides a repeatable blueprint for generating high-value outputs.

Context: Establishing the Scenario

Context is the foundation of any effective prompt. Instead of requesting a generic "training outline," an effective practitioner defines the specific environment. For instance, providing details such as "a SaaS company experiencing 40% year-over-year growth with a remote-first sales team" allows the AI to tailor its response to specific organizational pressures and cultural nuances.

Level: Specifying Expertise and Persona

The "Level" component dictates the complexity and tone of the response. AI can shift its output to match the perspective of a junior employee, a subject matter expert, or a senior executive. By instructing the AI to "respond as a Chief Learning Officer with 20 years of experience in enterprise digital transformation," the user ensures the advice aligns with strategic business goals rather than just tactical execution.

Expectation: Defining the Output Format

One of the most common errors in AI usage is failing to specify the desired format. L&D professionals can save significant time by requesting outputs in specific structures, such as a 5-column table, a markdown-formatted syllabus, or a series of JSON-formatted quiz questions for an LMS (Learning Management System).
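When the requested format is JSON, the reply can be checked mechanically before it goes anywhere near an LMS. The sketch below assumes a hypothetical schema (`question`, `options`, `answer_index`); a real LMS import format will differ, and the sample reply is invented for illustration.

```python
import json

# Hypothetical schema for this example only; not any specific LMS format.
REQUIRED_KEYS = {"question", "options", "answer_index"}


def parse_quiz(raw: str) -> list[dict]:
    """Parse a model's JSON reply and reject items that break the schema."""
    items = json.loads(raw)  # raises json.JSONDecodeError on malformed JSON
    if not isinstance(items, list):
        raise ValueError("Expected a JSON array of questions")
    for i, item in enumerate(items):
        if not isinstance(item, dict):
            raise ValueError(f"Question {i} is not a JSON object")
        missing = REQUIRED_KEYS - item.keys()
        if missing:
            raise ValueError(f"Question {i} is missing: {sorted(missing)}")
        options, answer = item["options"], item["answer_index"]
        if not isinstance(options, list) or not isinstance(answer, int):
            raise ValueError(f"Question {i} has wrongly typed fields")
        if not 0 <= answer < len(options):
            raise ValueError(f"Question {i} has an out-of-range answer_index")
    return items


sample_reply = (
    '[{"question": "What does LMS stand for?", '
    '"options": ["Learning Management System", "Local Media Server"], '
    '"answer_index": 0}]'
)
quiz = parse_quiz(sample_reply)
print(len(quiz), "question(s) passed validation")
```

A check like this only confirms structure. Whether the marked answer is actually correct still needs a human reviewer.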

Accuracy: Implementing Constraints and Validation

To mitigate the risk of misinformation, prompts should include requirements for the AI to state its assumptions or highlight areas where it lacks data. Asking the AI to "provide three counter-arguments to this training approach" or "cite the theoretical frameworks used in this design" pushes the system beyond superficial answers and gives the human reviewer specific claims to check. Any frameworks or sources the AI names should still be verified independently, since citations can themselves be hallucinated.

Refinement: The Iterative Conversation

The most successful AI users treat the tool as a collaborative partner rather than a vending machine. The first response is rarely the final product. Through iterative prompting—asking the AI to "shorten the third paragraph," "make the tone more empathetic," or "add a case study relevant to the healthcare sector"—the user polishes the output to meet professional standards.
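An iterative exchange like this is usually represented as a growing list of turns. The sketch below assumes a `role`/`content` dictionary shape, which mirrors a convention common to chat-style interfaces but is not tied to any particular vendor's API. The assistant replies are placeholders, and no model is called.

```python
def add_refinement(history: list[dict], request: str) -> list[dict]:
    """Return a new history with one more user turn appended."""
    return history + [{"role": "user", "content": request}]


history = [
    {"role": "user", "content": "Draft a 300-word intro to conflict de-escalation."},
    {"role": "assistant", "content": "(first draft returned by the model)"},
]

for request in ("Shorten the third paragraph.", "Make the tone more empathetic."):
    history = add_refinement(history, request)
    # A real workflow would send `history` to the model here and append
    # its actual reply; this placeholder stands in for that step.
    history.append({"role": "assistant", "content": "(revised draft)"})

for turn in history:
    print(f"{turn['role']}: {turn['content']}")
```

Keeping the full history matters because each refinement ("shorten the third paragraph") only makes sense relative to the draft that came before it.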

Comparative Analysis: Weak vs. Strong Prompting in L&D

The difference in outcomes between casual use and professional prompt engineering is stark. A weak prompt, such as "Give me ideas for leadership training," typically results in a list of clichés like "communication skills" and "time management."

In contrast, a strong, structured prompt might read: "Suggest five microlearning modules for mid-level managers in the manufacturing sector focusing on de-escalating floor-level conflicts. Each module must include a 2-minute video script outline and three scenario-based assessment questions." This level of precision allows the AI to act as a force multiplier, reducing the initial drafting phase of course development by an estimated 60% to 70%.
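One way to make a strong prompt repeatable is to store its components separately and render them on demand. The sketch below covers the first four CLEAR components (Refinement happens in follow-up turns, so it is left out). The class name `ClearPrompt` and the field wording are assumptions for illustration, and the example values are adapted from the scenarios described in this article.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ClearPrompt:
    context: str
    level: str
    expectation: str
    accuracy: str

    def render(self) -> str:
        # One labeled line per component, in declaration order.
        return "\n".join(
            f"{f.name.capitalize()}: {getattr(self, f.name)}" for f in fields(self)
        )


strong = ClearPrompt(
    context="Mid-level managers in the manufacturing sector who must "
            "de-escalate floor-level conflicts.",
    level="Respond as a Chief Learning Officer with 20 years of experience.",
    expectation="Five microlearning modules, each with a 2-minute video script "
                "outline and three scenario-based assessment questions.",
    accuracy="State your assumptions and flag areas where you lack data.",
)
print(strong.render())
```

Because every field is required, a weak request such as "Give me ideas for leadership training" cannot be expressed without first supplying the missing context, level, and format.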

The Tool Landscape: Selecting the Right Engine for the Task

The market for AI tools has diversified, offering L&D professionals various specialized options:

- General Assistants (ChatGPT, Claude): Best for creative brainstorming, content drafting, and role-playing scenarios.

- Research-Focused Tools (Perplexity AI, Consensus): These tools prioritize source attribution and are essential for verifying the scientific or legal basis of training content.

- Enterprise Copilots (Microsoft 365 Copilot, Gemini for Google Workspace): These integrate directly with internal company data, allowing L&D teams to ask questions about their own proprietary policies or past training records securely.

The choice between free and paid versions often comes down to data security and processing power. While free versions are suitable for exploratory tasks, paid enterprise tiers are generally required for handling sensitive company information and accessing the most advanced reasoning models (such as GPT-4o or Claude 3.5 Sonnet).

Broader Impact on the L&D Profession

The integration of AI questioning into the L&D workflow is not merely a technical upgrade; it is a shift in the value proposition of the instructional designer. As AI takes over the "heavy lifting" of content generation and data summarization, the human professional’s role shifts toward that of an architect and editor.

Strategic decision-making becomes the primary focus. L&D leaders are now tasked with evaluating the pedagogical integrity of AI-generated content and ensuring it aligns with the broader business strategy. Furthermore, the ability to scale personalized learning has become a reality. By using refined prompts, L&D teams can quickly adapt a single core curriculum into dozens of variations tailored to different languages, job roles, and regional regulations.
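The fan-out from one core curriculum to many tailored variants can be sketched as a template filled from every combination of audience attributes. The template wording, the function name `build_variants`, and the sample values below are all assumptions for illustration.

```python
from itertools import product

# Hypothetical template; real wording would come from the L&D team.
CORE_TEMPLATE = (
    "Adapt the core module '{module}' for a {role} audience. "
    "Write in {language} and reflect {region} regulations."
)


def build_variants(
    module: str, roles: list[str], languages: list[str], regions: list[str]
) -> list[str]:
    """Return one prompt per (role, language, region) combination."""
    return [
        CORE_TEMPLATE.format(module=module, role=role, language=language, region=region)
        for role, language, region in product(roles, languages, regions)
    ]


variants = build_variants(
    module="Workplace Safety Basics",
    roles=["line supervisor", "new hire"],
    languages=["English", "Spanish"],
    regions=["EU", "US"],
)
print(len(variants), "prompt variants")
print(variants[0])
```

A full cross product can include combinations that make no sense for a given organization, so in practice the list would be filtered to the pairings that actually exist, and each generated variant would still be reviewed.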

Challenges and Ethical Implications

Despite the efficiencies, the transition to AI-driven inquiry is not without risks. Privacy remains a top concern for corporate legal departments. Inputting proprietary company data into public AI models can lead to data leaks, because some providers use user inputs for further training unless that option is disabled. Consequently, many organizations are now implementing strict "AI Acceptable Use Policies" and investing in private, "walled garden" AI environments.
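One small safeguard an acceptable use policy might call for is scrubbing obvious identifiers before text is pasted into a public tool. The sketch below is deliberately naive: the email pattern is simplified, the list of confidential terms is hypothetical, and it is no substitute for a proper data loss prevention process.

```python
import re

# Simplified pattern; it will not catch every valid email address.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(text: str, confidential_terms: list[str]) -> str:
    """Mask email addresses and listed terms before text leaves the company."""
    text = EMAIL.sub("[EMAIL]", text)
    for term in confidential_terms:
        if term:  # skip empty strings, which would match everywhere
            text = re.sub(re.escape(term), "[REDACTED]", text, flags=re.IGNORECASE)
    return text


draft = "Summarize Project Falcon feedback sent to jane.doe@example.com."
print(redact(draft, ["Project Falcon"]))
# prints: Summarize [REDACTED] feedback sent to [EMAIL].
```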

Moreover, there is the risk of "cognitive offloading," where professionals may become over-reliant on AI, leading to a decline in critical thinking or a loss of the "human touch" in training. Industry analysts emphasize that AI should be viewed as a "co-pilot," not an autopilot. The final accountability for the accuracy and impact of a learning program remains with the human designer.

Conclusion and Future Outlook

The mastery of AI questioning marks a new era in corporate education. As the technology continues to evolve toward "agentic AI"—systems that can not only answer questions but also execute multi-step tasks—the importance of clear, logical, and contextual communication will only increase.

For L&D professionals, the path forward involves continuous upskilling in prompt engineering and AI literacy. Those who can effectively bridge the gap between human intent and machine execution will be the ones to lead their organizations through the next phase of digital transformation. By moving beyond simple queries and adopting structured frameworks like CLEAR, the L&D community can transform AI from a novelty into a powerful engine for organizational growth and learner success.