The proliferation of Artificial Intelligence (AI) agents within Human Resources (HR) departments has brought substantial gains in efficiency and automation. It has also created a significant operational and legal blind spot: the agents themselves do not appear in the fundamental systems that govern the workforce. As a result, critical HR actions, from initial candidate screening to time-off approvals and performance flagging, are being executed by non-human actors whose involvement is largely undocumented within traditional HR Information Systems (HRIS). This article examines the architectural limitations of current HRIS platforms and the gaps in visibility, auditability, and authority that emerge when AI agents are deployed. It then outlines a pragmatic framework that Chief Human Resources Officers (CHROs) can use to address the challenge, support compliance, and reduce legal exposure.

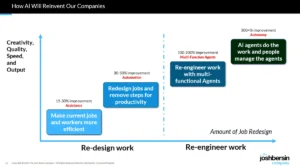

The current discourse surrounding AI in HR, while acknowledging its transformative potential, has largely focused on the conceptual shifts and the evolution of automation from task-level to full process execution. As highlighted by Josh Bersin’s February 2026 piece in this publication, "From Copilots to Super Agents: HR’s 2026 Shift," the operational model of HR is undergoing a profound rewrite. Concurrently, Peter Cappelli’s challenge to the notion of treating AI agents as fellow employees in his January 2026 article, "The Fallacy of Treating AI Agents as Fellow Employees," underscores the critical need for supervision and accountability. While both perspectives are valid, they touch upon a more fundamental architectural problem: most enterprise HRIS platforms were not designed to accommodate or audit workflows involving non-human workers. This gap is not merely a technical inconvenience; it represents a growing legal and governance liability for organizations.

The Legacy Foundation: HRIS Built on the Employment Relationship

The bedrock upon which every HRIS platform has been constructed over the past four decades is the singular concept of the "employment relationship." This is not merely a software design choice but a deeply embedded legal and regulatory construct. An employment relationship is defined by a legal start date, a structured compensation framework, a clear reporting hierarchy, adherence to protected-class obligations, and a meticulously maintained audit trail that HR is legally mandated to preserve. Payroll, performance management, and succession planning all operate on this foundational assumption of a human worker with a defined legal tie to the organization.

AI agents, by their very nature, do not possess this employment relationship. They are not employees or contractors. They do not receive a W-2 or a 1099, hold a position within the organizational job architecture, or have a manager of record. Yet, they are increasingly performing tasks that were historically the purview of human employees, creating a disconnect between the systems designed to manage the workforce and the evolving reality of how work is being done. This disparity represents what can be termed HR’s "conceptual debt." For decades, workforce management systems have operated under the implicit assumption that "worker" equates to "person with a legal relationship to this organization." This assumption, while accurate for its time, has been outpaced by technological advancement, leaving systems fundamentally incomplete in the face of AI integration.

The Three Critical Gaps Unveiled by AI Deployment

The integration of AI agents into core HR processes immediately exposes three distinct and significant gaps within existing HRIS frameworks. These gaps, observed firsthand across various organizational implementations, pose immediate challenges to operational integrity and legal compliance.

The Visibility Gap: The Unseen Workforce

A primary challenge is the profound lack of visibility into the AI workforce. In most HRIS platforms, there is no centralized, easily accessible repository that details which AI agents exist, who is responsible for their deployment and oversight, and precisely which HR processes they are interacting with. Typically, AI agents are provisioned by IT departments as service accounts, operating in a technical shadow. HR departments often discover their existence and operational scope only after an issue arises, leading to a reactive rather than proactive management approach. This lack of a clear inventory makes it exceedingly difficult for HR to understand the full landscape of its automated operations.

The Audit Gap: The Missing Record of Action

The audit gap is perhaps the most pressing concern from a legal and compliance perspective. When an AI agent performs a critical HR function – such as screening a candidate’s resume, approving a time-off request, or routing an HR service case – the HRIS often records the outcome but not the specific actor. A candidate might be advanced to the next stage of the hiring process, a leave request approved, or a service case closed. The system registers a result, but crucially, it fails to indicate that a non-human process generated that outcome. This absence of a clear audit trail creates significant legal exposure. In the event of a dispute or investigation, organizations may be unable to demonstrate the provenance of a decision, potentially leading to allegations of bias, unfairness, or procedural impropriety.

The Authority Gap: The Broken Chain of Command

Traditional workforce management relies on well-defined delegation chains, meticulously captured and maintained within the HRIS. Authority flows from senior leadership down through layers of management, providing a clear line of accountability. When an issue arises, HR can trace the decision-making process back to the responsible individual who delegated specific responsibilities. AI agents fundamentally disrupt this established order. When an agent screens out a candidate based on a skills-matching algorithm, it is not acting under a delegation of authority that HR has formally approved or documented. Yet legal accountability for that decision, however unintended, still flows upward to HR, even though the decision itself followed a path outside of HR’s direct oversight and control. This "reverse delegation problem" has no precedent in HR’s history, and therefore no established model for resolving it.

A Three-Step Framework for CHROs: Reclaiming Governance

Addressing the challenges posed by the invisible AI workforce is not merely a technical undertaking for IT departments; it is a critical organizational governance issue that falls squarely within the purview of the CHRO. A strategic, multi-pronged approach is necessary to re-establish control and mitigate risk.

Step 1: Formalize the "Agent Workforce" Inventory

The foundational step is to acknowledge and formally document the existence of AI agents as legitimate workforce entities, not just as IT service accounts. This requires building or mandating an inventory that treats each AI agent performing work within HR processes as a distinct workforce component. This inventory should detail:

- Functionality: What specific tasks does the agent perform?

- Ownership: Who is the human owner or sponsor responsible for this agent?

- Process Interaction: Which HR processes does the agent touch?

- Data Access: What data is the agent permitted to access, and under what conditions?

While this inventory does not need to reside within the HRIS from day one, it must be owned and managed by HR, not solely by IT. Starting with a robust spreadsheet or a dedicated HR-managed database is a practical initial step, ensuring HR has a clear understanding of its automated operational landscape.
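As a minimal sketch of what such an HR-owned inventory could look like before it moves into the HRIS, the Python below models one inventory row per agent and writes the result to CSV so it can start life as a spreadsheet. The class name `AgentRecord`, every field name, and the sample agents are illustrative assumptions, not part of any HRIS product.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class AgentRecord:
    """One row in an HR-owned agent inventory (all names are hypothetical)."""
    agent_id: str
    functionality: str            # what tasks the agent performs
    human_owner: str              # accountable human owner or sponsor
    hr_processes: list[str]       # HR processes the agent touches
    data_access: list[str]        # data the agent is permitted to access
    access_conditions: str = ""   # optional conditions on that access


def missing_fields(record: AgentRecord) -> list[str]:
    """Return the names of required inventory fields that are still empty."""
    return [
        name
        for name, value in asdict(record).items()
        if name != "access_conditions" and not value
    ]


def to_csv(records: list[AgentRecord]) -> str:
    """Serialize the inventory to CSV text, joining list fields with '; '."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(AgentRecord)])
    writer.writeheader()
    for record in records:
        row = asdict(record)
        row["hr_processes"] = "; ".join(record.hr_processes)
        row["data_access"] = "; ".join(record.data_access)
        writer.writerow(row)
    return buffer.getvalue()


inventory = [
    AgentRecord(
        agent_id="resume-screener-01",
        functionality="Screens candidate resumes",
        human_owner="jane.doe@example.com",
        hr_processes=["Recruiting"],
        data_access=["Candidate resumes"],
        access_conditions="Open requisitions only",
    ),
    AgentRecord(
        agent_id="pto-approver-01",
        functionality="Approves time-off requests",
        human_owner="",  # no owner recorded yet: a gap HR should close
        hr_processes=["Leave management"],
        data_access=["Leave balances"],
    ),
]

for record in inventory:
    gaps = missing_fields(record)
    if gaps:
        print(f"{record.agent_id} is missing: {', '.join(gaps)}")

print(to_csv(inventory))
```

The completeness check is the part that matters most for governance: an agent with no recorded human owner is exactly the kind of gap the inventory exists to surface.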

Step 2: Proactive Audit Trail Construction

The construction of comprehensive audit trails must precede, not follow, the occurrence of problems. Every action taken by an AI agent within an HR process needs to be meticulously logged within the system of record. This log should capture:

- The Agent: Which specific AI agent performed the action?

- The Record: On which specific employee or candidate record was the action performed?

- Inputs: What data or parameters were used as input for the agent’s decision?

- Outcome: What was the result of the agent’s action?

- Human Oversight: Was the outcome reviewed or modified by a human, and if so, by whom and when?

This architectural requirement must be clearly articulated to internal HR technology teams and external HR technology vendors. The ability to extract this complete audit trail directly from the HRIS, rather than relying on fragmented vendor dashboards or server logs, is paramount for legal defensibility.
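The five elements listed above can be sketched as a single log entry type. The Python below is an illustration under stated assumptions: `AgentAuditEntry`, `log_action`, and `log_review` are hypothetical names, the in-memory list stands in for the system of record, and the choice to append a new entry for each human review (rather than editing the original) is one possible design, not a requirement drawn from any HRIS vendor.

```python
import json
from dataclasses import asdict, dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentAuditEntry:
    """One immutable audit record for an agent action (names are hypothetical)."""
    agent_id: str                      # which agent performed the action
    record_id: str                     # employee or candidate record acted on
    inputs: dict                       # data or parameters used for the decision
    outcome: str                       # result of the action
    occurred_at: str                   # ISO 8601 timestamp, UTC
    reviewed_by: Optional[str] = None  # human reviewer, if any
    reviewed_at: Optional[str] = None  # when the review happened


def _timestamp(at: Optional[datetime]) -> str:
    """Use the supplied time, or the current UTC time if none is given."""
    return (at or datetime.now(timezone.utc)).isoformat()


def log_action(log, agent_id, record_id, inputs, outcome, at=None):
    """Append the agent's original action to the log and return the entry."""
    entry = AgentAuditEntry(agent_id, record_id, inputs, outcome, _timestamp(at))
    log.append(entry)
    return entry


def log_review(log, entry, reviewer, final_outcome, at=None):
    """Append a reviewed copy of the entry; the original stays untouched."""
    reviewed = replace(
        entry,
        outcome=final_outcome,
        reviewed_by=reviewer,
        reviewed_at=_timestamp(at),
    )
    log.append(reviewed)
    return reviewed


audit_log: list[AgentAuditEntry] = []
original = log_action(
    audit_log,
    agent_id="resume-screener-01",
    record_id="candidate-4821",
    inputs={"requisition": "REQ-17", "skills_match_score": "0.42"},
    outcome="rejected",
)
log_review(audit_log, original, reviewer="jane.doe@example.com", final_outcome="advanced")

# Each entry serializes to one JSON line, a format that is simple to export.
for entry in audit_log:
    print(json.dumps(asdict(entry)))
```

Keeping the original entry alongside the reviewed one preserves both what the agent decided and what the human changed, which is the provenance a dispute would turn on.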

Step 3: Clearly Defined Authority Lines

Establishing clear lines of authority for AI agents is a critical governance task. Every agent operating within HR processes must have a designated human owner and a precisely documented scope of authority. This includes defining:

- Independent Decisions: Which decisions can the agent make autonomously?

- Human Review Thresholds: What types of decisions require mandatory human review or approval?

- Escalation Triggers: Under what circumstances should an agent’s action or decision be escalated to a human for intervention?

This governing structure is largely absent in most organizations today. Its development and implementation are the responsibility of the CHRO, focusing on workforce governance principles rather than purely technical system engineering.
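One way to make such a scope of authority concrete is to write it down as data and route every proposed decision through it. The sketch below is illustrative only: `AuthorityPolicy`, `Route`, the decision names, and the escalation flag are all hypothetical, and the rule that anything outside the documented scope goes to a human is an assumption about sensible defaults rather than an established standard.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Iterable


class Route(Enum):
    AUTONOMOUS = "autonomous"      # agent may act on its own
    HUMAN_REVIEW = "human_review"  # a human must review or approve
    ESCALATE = "escalate"          # hand off to the human owner


@dataclass
class AuthorityPolicy:
    """Documented scope of authority for one agent (names are hypothetical)."""
    agent_id: str
    human_owner: str
    autonomous_decisions: set[str]
    review_required: set[str]
    escalation_triggers: set[str] = field(default_factory=set)

    def route(self, decision_type: str, flags: Iterable[str] = ()) -> Route:
        """Decide how a proposed decision must be handled."""
        # Escalation triggers take priority over everything else.
        if set(flags) & self.escalation_triggers:
            return Route.ESCALATE
        if decision_type in self.review_required:
            return Route.HUMAN_REVIEW
        if decision_type in self.autonomous_decisions:
            return Route.AUTONOMOUS
        # Anything outside the documented scope defaults to a human.
        return Route.ESCALATE


policy = AuthorityPolicy(
    agent_id="resume-screener-01",
    human_owner="jane.doe@example.com",
    autonomous_decisions={"advance_candidate"},
    review_required={"reject_candidate"},
    escalation_triggers={"accommodation_request_present"},
)

print(policy.route("advance_candidate"))
print(policy.route("reject_candidate"))
print(policy.route("advance_candidate", flags={"accommodation_request_present"}))
print(policy.route("change_compensation"))
```

Defaulting undocumented decisions to escalation means that an agent acquiring a new capability does not silently acquire new authority along with it.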

Demands for 2026 Contract Renewals: Shifting Vendor Accountability

With many enterprise HR technology contracts coming up for renewal in 2026, CHROs and HRIS leaders have a critical opportunity to influence vendor roadmaps and secure essential capabilities. Key requirements to place on the table include:

- Agent Registration Capability: The HRIS platform must be capable of registering and managing AI agents as distinct workforce entities, on par with human employees. Vendors such as Workday, with its forthcoming Agent System of Record feature, are moving in this direction. A thorough assessment of each vendor’s roadmap in this area is essential.

- AI Action Logging: The platform must capture AI agent actions directly within the system of record, not in separate analytical tools. This ensures that HR and legal teams have on-demand access to comprehensive audit trails.

- Transparent Controls: HR must have the ability to define granular access controls for AI agents, specifying the data they can access, the sensitivity levels, and geographical limitations. Crucially, these permissions should be changeable or revocable without requiring IT intervention through a service ticket.

- Comprehensive Audit Export: The HRIS should facilitate the seamless export of complete logs detailing every AI-involved decision within a specified period. This data must be in a format that legal counsel can readily utilize, eliminating reliance on vendor support teams for its generation.

Vendors who can demonstrate working features that meet these requirements should be prioritized for contract renewals. Those who can only offer theoretical roadmaps presented in slide decks warrant careful scrutiny and may need to be placed on a vendor watch list.
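When evaluating a vendor demonstration against the Transparent Controls requirement, it can help to have a concrete picture of the behavior being asked for. The sketch below is a hypothetical model, not any vendor's API: `AgentGrant`, the `Sensitivity` levels, and the region codes are assumptions chosen for illustration. It shows a grant scoped by data category, sensitivity level, and geography that its HR owner can revoke directly.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Illustrative sensitivity tiers; a real scheme would come from HR and legal."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass
class AgentGrant:
    """Access granted to one agent, managed by HR (names are hypothetical)."""
    agent_id: str
    data_categories: set[str]      # kinds of data the agent may read
    max_sensitivity: Sensitivity   # highest sensitivity tier allowed
    regions: set[str]              # geographies the grant covers
    revoked: bool = False

    def allows(self, category: str, sensitivity: Sensitivity, region: str) -> bool:
        """Return True only if every condition of the grant is satisfied."""
        return (
            not self.revoked
            and category in self.data_categories
            and sensitivity <= self.max_sensitivity
            and region in self.regions
        )

    def revoke(self) -> None:
        """Withdraw all access immediately, with no service ticket involved."""
        self.revoked = True


grant = AgentGrant(
    agent_id="pto-approver-01",
    data_categories={"leave_balances"},
    max_sensitivity=Sensitivity.INTERNAL,
    regions={"US", "CA"},
)

print(grant.allows("leave_balances", Sensitivity.INTERNAL, "US"))      # within scope
print(grant.allows("leave_balances", Sensitivity.CONFIDENTIAL, "US"))  # too sensitive
print(grant.allows("leave_balances", Sensitivity.INTERNAL, "DE"))      # wrong region
grant.revoke()
print(grant.allows("leave_balances", Sensitivity.INTERNAL, "US"))      # revoked
```

A useful question for a vendor is whether each of these four checks can be demonstrated live in the product, and whether HR can perform the revocation itself.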

The Org Chart as a Legal Document: Navigating the Future of HR Governance

Regulators, courts, and plaintiff attorneys view the organizational chart as a critical document that elucidates the authority structures underpinning challenged employment decisions. As evidenced by ongoing discussions regarding the strategic authority of CHROs, HR’s influence is directly proportional to the structures it demonstrably controls.

The proliferation of AI agents is creating a "ghost org chart" – a parallel structure of decision-making that accumulates legal exposure outside of any controlled framework. The core problem is not a deficiency in technology or platform maturity per se, but rather the fundamental disconnect between AI agents performing real employment-related work and the systems of record that fail to document this activity.

Organizations that proactively address this issue will be best positioned to close the critical gaps in visibility, auditability, and authority. They will leverage upcoming contract renewals to hold vendors accountable for delivering the necessary infrastructure and will treat AI agent governance as an integral component of overall workforce governance, firmly anchored within HR’s domain, not relegated to IT. This strategic foresight is essential for navigating the evolving landscape of HR and safeguarding the organization against emerging legal and operational risks.